By TREVOR HOGG

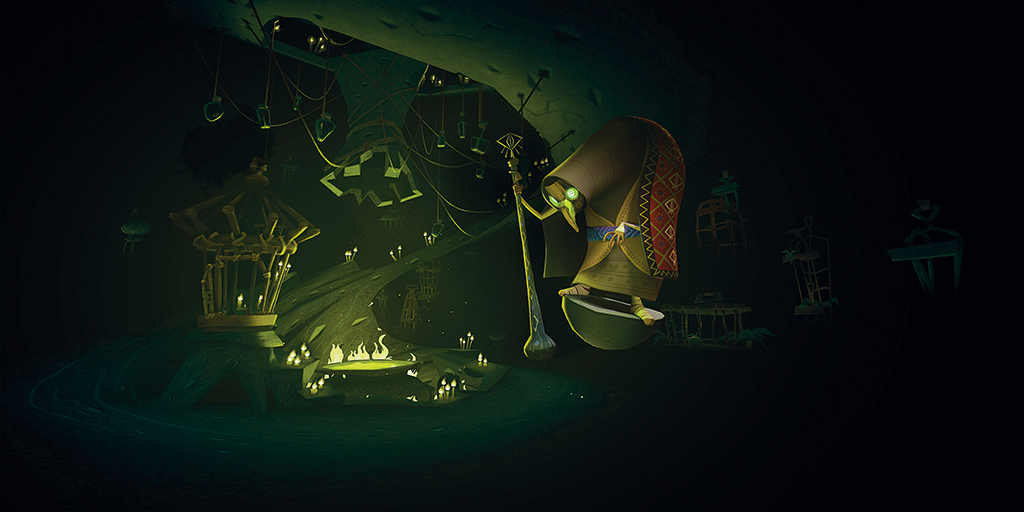

In Slavic folklore, a supernatural being associated with wildlife appears as a deformed old woman and lives in a hut; she provides the inspiration for Baba Yaga, an interactive VR experience produced by Baobab Studios in partnership with Conservation International and the United Nations ActNow campaign that is available exclusively on Oculus Quest.

As settlers encroach on a rainforest, their chief becomes deathly ill, leading her two children to embark on a journey to find a cure in the form of the mysterious Witch Flower. Serving as the writer, director and cinematographer on the project is Eric Darnell, Co-Founder and Chief Creative Officer of Baobab Studios, with the voice cast consisting of Glenn Close, Kate Winslet, Jennifer Hudson and Daisy Ridley. “While it’s about a mean old witch who lives in the forest and maybe eats children,” states Darnell, “Baba Yaga is also a story about recognizing our connection with the natural world. In doing so, perhaps we’ll be more inclined to live in harmony with it.”

Since the release of Invasion! in 2016, the independent interactive animation studio established by Darnell, CEO Maureen Fan and CTO Larry Cutler has gone on to win six Emmy Awards and two Annies. “It has been an amazing journey for us,” remarks Darnell. “When we made Invasion! it became clear to us that VR is not like cinema. It’s a different medium. We recognized, which is obvious now, that our superpower is immersion. You can look around, but if you’re running in real-time then we can have interactivity. Characters can respond to what you do. It got us to think about how we can tell stories that make the viewer the main character and feel that their choices are meaningful and matter. I like to think of story as being primarily about characters making big decisions that reveal something about themselves.”

Allowing viewers to impact the narrative with their choices means that multiple outcomes need to be produced. “It can get complicated,” admits Darnell. “We explored that more in Baba Yaga, where there is more flexibility in how the story can flow and finish. But the other thing that we have realized over these last five years is sometimes it’s the little things that make the viewer feel engaged and connected with the world. In Baba Yaga, there is a moment where another character offers you a lantern, and more than one person has commented on how it felt magical that she reaches out to you, you raise your hand, and suddenly you have this lantern, and are able to point it around to see the dark forest better.”

Machine learning has enhanced the interactivity and immersion as characters can believably respond to the actions of the viewer. “Bonfire was the first time that the viewer was the main character and has to interact with this alien creature that is hiding out there in the dark jungle,” states Darnell. “You can pick up a piece of food and offer it to Pork Bun or throw a flaming log at him. Depending on what you do, the character has to be always ready to respond in a way that makes sense. If you throw the flaming log, it might run away, hide for a minute and come back out when it starts to feel safe again. We have to find ways to not only be able to react to the range of things that the audience can do, but react to a frequency of doing certain things. When you combine that with trying to find ways for the AI system to work with handcrafted animation, for it to pick from this big library of potential actions, insert them into the scene, and actually modify everything at the same time because it matters where the character is, how they’re oriented to the viewer and where the object of its desire is, it became a complex problem that ultimately paid off for us.”
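The idea Darnell describes, choosing a handcrafted reaction based on both what the viewer does and how often they do it, can be illustrated with a minimal sketch. This is not Baobab's system: the clip names, the `REACTIONS` table and the `ReactionSelector` class are all hypothetical, and a real implementation would also account for the character's position and orientation relative to the viewer.

```python
from collections import Counter

# Hypothetical library of hand-authored reaction clips, keyed by viewer action.
# Each list escalates: later entries are used when the action is repeated.
REACTIONS = {
    "offer_food": ["sniff_cautiously", "approach", "eat_from_hand"],
    "throw_log": ["flinch", "run_and_hide", "stay_hidden_longer"],
}


class ReactionSelector:
    """Pick a reaction clip from the action type and how often it has occurred."""

    def __init__(self, library):
        self.library = library
        self.counts = Counter()  # how many times each action has been seen

    def react(self, action):
        clips = self.library.get(action)
        if not clips:
            return "idle"  # fallback for actions with no authored reaction
        # Repeating an action moves further down the list, capped at the last clip.
        index = min(self.counts[action], len(clips) - 1)
        self.counts[action] += 1
        return clips[index]
```

In this sketch, throwing the log a second time yields a stronger reaction than the first, which is one simple way to model reacting to frequency rather than to a single event.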

As it did for the rest of the world, the pandemic pushed Baobab Studios to adopt a remote workflow for the production. “Our team had become international and diverse already,” explains Larry Cutler, CTO at Baobab Studios. “For Baba Yaga, co-director Mathias Chelebourg lives in France and so did our original production designer, Matthieu Saghezchi. One of our lead animators was in Poland and our modeler was in the Philippines. We had cracked the challenge of how to build toolsets that enable you to review dailies in VR with everyone present, so when we decided to have everyone switch to being remote in early March 2020, it didn’t change our process that much. There were certain things that we said like, ‘The only way you could actually shoot all of the 2D footage [for the cinematic version] in VR was if we were all together in the screening room.’ But we were able to do that remotely, which was crazy.”

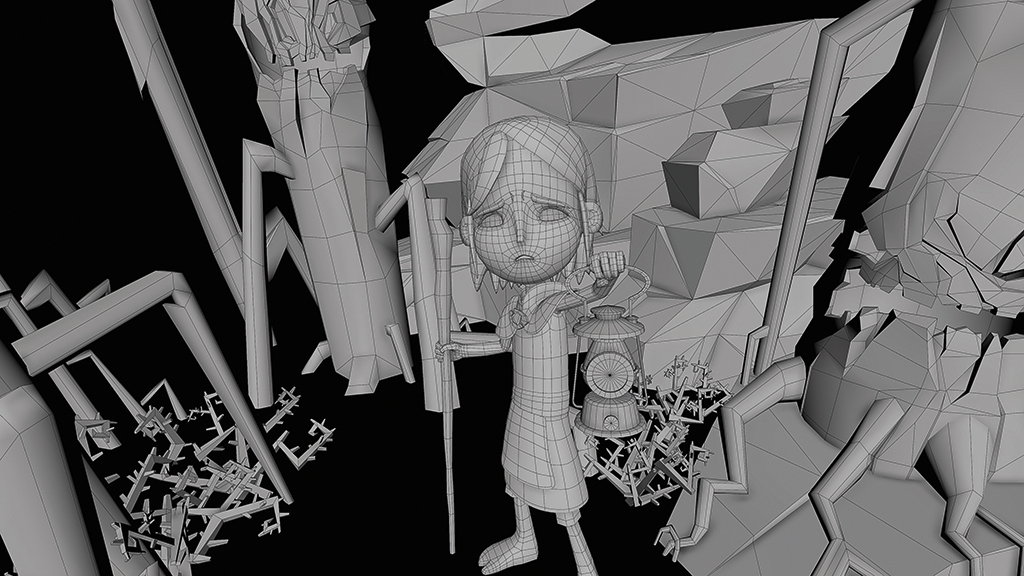

Working within a new medium meant that Baobab Studios had to develop a holistic toolset called Storyteller. “All of our animation is done in Maya and the real-time work in Unity,” states Cutler. “We built an entire toolset called Storyteller on top of those amazing platforms, as well as things that are standalone, such as our own shot database.” Human characters make their debut in Baba Yaga. “Over time,” adds Cutler, “the animation industry has been able to do convincing humans, but they involve simulated clothing, hair and complex rigs. To do that running at 72 fps, rendering both eyes and having systems that are reactive, was daunting for us but exciting to take on for Baba Yaga. We had to have clothing that was believable, hair, nuanced facial performances and great animation: all of these things had to come together.

“We built our own subdivision surface rendering system within Unity, so you could get sharp creases around the eyebrows and nose of Magda [Daisy Ridley]. Eric wanted the 2D version to still be told from a first-person emotional POV. That meant it had to be handheld and not feel like a single-camera move. As a result, we built a whole toolset for Eric to shoot all of the camerawork himself in VR.”

Breaking the fourth wall between the characters and viewer was an area of concern for Ken Fountain, Animation Supervisor at Baobab Studios. “We had to come up with some cool workflow techniques in order to allow what we were doing to connect with the automated look-at system that we had developed.

“We were worried about the tiniest details of eye convergences and micro-expressions in facial features that you normally don’t spend a lot of time thinking about because you’re not trying in a movie to persuade an audience to actually do something. Oftentimes in animation, we simplify in order to get the point across more clearly. When you have a character that is two feet away from your face, you need the complexity, so knowing the musculature of the face and making sure that the animators are all using it the same way does add a level of complication to it.”

“We had to design our workflow based on branching narratives,” explains Fountain. “Our scene structure had to develop its own language. What we would call different clips of animation, how we would group things together as scenes and pieces of that scene and layers of that scene. The joy of working in a new technology is nobody has written the workflow yet. What makes a difference is how well you have mapped out those ‘what if’ situations ahead of time, because AI is not yet sophisticated enough to be able to do a lot of the heavy lifting. A lot of the heavy lifting has to happen in planning the scene and saying, ‘We’ve tested this with some user tests and have discovered that they want to do this.’ We need to plan the branching action to be able to accommodate what the user wants to do or what they might choose.” Maya has the ability to layer animation. “We don’t want this to look like a bunch of clips stitched together,” adds Fountain. “It needs to look like a hand-animated performance everywhere. We worked with our engineers to come up with great blending strategies, and ways to layer parts of animation to hide transitions and make things feel more continuous.

“How could we harness what Maya can do and send that to Unity in a way that Unity can use it modularly and be able to blend things together? That was through processes that came heavily across the last three productions.”
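As a rough illustration of what blending between clips to hide a transition means, the sketch below crossfades one pose into another with an eased weight. It is an assumption-laden toy, not the studio's pipeline: poses are reduced to hypothetical per-joint angles in a dictionary, whereas production rigs blend full transforms (typically with quaternion rotations) inside the engine.

```python
def smoothstep(t):
    """Ease-in/ease-out weight: 0 at t=0, 1 at t=1, clamped outside that range."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def blend_poses(pose_a, pose_b, t):
    """Crossfade from pose_a to pose_b as t goes from 0 to 1.

    Poses are hypothetical dicts mapping a joint name to a single angle in
    degrees; both poses are assumed to contain the same joints.
    """
    w = smoothstep(t)
    return {joint: (1.0 - w) * pose_a[joint] + w * pose_b[joint] for joint in pose_a}
```

Easing the weight, rather than interpolating linearly, avoids an abrupt start and stop at either end of the transition, which is one reason a join between two clips can read as a single continuous performance.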

Impacting the effects style was the pop-up storybook aesthetic of the prologue and epilogue, as well as the decision to adopt a stop-motion approach towards the characters. “Because the whole world of Baba Yaga feels hand-drawn, a lot of our effects are hand-drawn,” notes Nathaniel Dirksen, VFX Supervisor and Head of Engineering at Baobab Studios. “Rather than using Houdini for effects, we would use Procreate. We actually had an animator draw fire on their iPad, animate fire in Procreate or Blender, and do 2D effects. We hadn’t done 2D effects and brought them into VR before. The Witch Flower had a lot of elements going into it, so we used Houdini to maximize the art direction in order to get the effect that we wanted. In the 2D version, we redid the Witch Flower as a 2D effect that was hand-drawn. In the VR piece, the Witch Flowers are a lot further away from the action, so the effect that we had done didn’t hold up well when we got them into the 2D version [where they appear much closer to the camera].”

The Oculus Quest is an untethered headset that relies on a smartphone-class GPU rather than a powerful computer. “If you give the user the ability to shine a light around the scene, you’ve just made life harder, but it is cool, so you do it anyway!” laughs Dirksen. “Doing real-time lighting is more expensive computationally, so on the Quest, which has limited rendering power, we had to be careful about how much extra computational complexity we added in. We wanted to have this moment of Magda giving you the lantern to rope you into going onto the journey with her.”

The lighting setups had to be precisely scripted. “The way that things turn on and off is carefully choreographed to make sure that it works,” Dirksen adds. “The forest has a cool, blue-ish, layered background, giving this notion that it goes off into the distance. That starts to fade out and be simplified once the flytraps come out. We have to make sure that we’re not having too many expensive things onscreen at the same time. You set it up in the beginning, establish that it’s there, people get a feeling for the forest, darken things down and focus on a particular area of the scene. Choices like that are helpful in terms of focusing the viewer but also keeping our render budget in line.”
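The render budget Dirksen mentions can be made concrete with some simple arithmetic. At the 72 fps target cited earlier, each frame has roughly 13.9 ms for everything, including both eye views. The sketch below checks a set of active scene elements against that budget; the element names and per-element costs are hypothetical, and real profiling is far more involved.

```python
TARGET_FPS = 72
FRAME_BUDGET_MS = 1000.0 / TARGET_FPS  # time available per frame, in milliseconds


def within_budget(active_costs_ms, budget_ms=FRAME_BUDGET_MS):
    """Return True if the summed cost of everything onscreen fits in one frame.

    active_costs_ms maps a (hypothetical) scene element name to its estimated
    per-frame cost in milliseconds.
    """
    return sum(active_costs_ms.values()) <= budget_ms
```

Under these assumed numbers, a layered background at 5 ms plus a real-time lantern light at 6 ms fits within the frame, while adding a further 4 ms element does not, which is the kind of trade-off that motivates fading the background out before the next expensive element appears.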

Orchestrating the ADR sessions turned out to be extremely complicated because of the safety protocols required during the coronavirus pandemic. “Glenn Close, who plays the mother, already had all of her dialogue recorded, but we still needed to get Kate Winslet, Jennifer Hudson and Daisy Ridley to do all of their voice recordings,” recalls Scot Stafford, Founder and Creative Director of Pollen Music Group. “None of them had home studios. We found a pandemic-proof studio [for Daisy Ridley in London], a pandemic-proof remote recording operation that goes into people’s homes, builds it out and then takes it all down [for Kate Winslet], and an in-house solution in Chicago for Jennifer Hudson [with the engineer who records a lot of her music]. There were several takes that weren’t usable, but we got all of the good ones by the skin of our teeth.”

The sound design was built around the idea that the story takes place in an Arctic rainforest. “I thought that concept was fascinating,” remarks Stafford. “I was inspired by some of the otherworldly sounds that you hear as the ice cracks and melts on a frozen lake. There are these crazy squeaks, swoops and sine waves. I created a creature out of those noises that would sound like a birdcall. You hear it three times very briefly. As you progressed there were different states of immersion, from being stylized stagecraft, to being intimate and domestic, to being immersed in this incredibly powerful forest.”

“Making sure that different scenarios actually had a satisfying through line was the biggest accomplishment on this film,” notes Cutler. “All of these other pieces feed into that.”

At the conclusion of Baba Yaga, viewers make a fateful choice, which is determined by the hand gestures they use. “What I’m most interested in is how people react and respond to the opportunity to go down more than one path, and do people find that compelling enough to go back and try it again?” states Darnell.

“That’s the hope. This is the most complex interactive project that we’ve done, particularly when it comes to giving the viewer the opportunity to make their own decisions about how the story is going to play out.”