By IAN FAILES

Today, virtual production encompasses many areas – visualization, real-time rendering, LED wall shoots, simulcams, motion capture, volumetric capture and more. With major advances in processing power for real-time workflows, virtual production tools have exploded as filmmaking and storytelling aids.

The idea, of course, is to give filmmakers and storytellers more flexible ways to both imagine and then tell their stories, and in that way virtual production has no doubt improved creative outcomes.

Via on-the-ground stories told by visual effects, virtual production and visualization supervisors, we look at examples of where virtual production has provided new options and practical solutions to storytellers and how it has made a clear difference on real productions.

VISUALIZATION IS STORYTELLING

Studios specializing in previs (now more commonly referred to as visualization) have always helped filmmakers shape stories. In the past few years, those same studios have also spearheaded a number of virtual production innovations. Proof Inc., for example, recently added real-time motion capture during its virtual camera (VCAM) sessions. “We can now direct performance and cinematography at the same time,” notes Proof Creative Technology Supervisor Steven Hughes. “The data captured during a VCAM session is quickly passed to the shot creators, who know that the staging and composition are already in line with the director’s vision.”

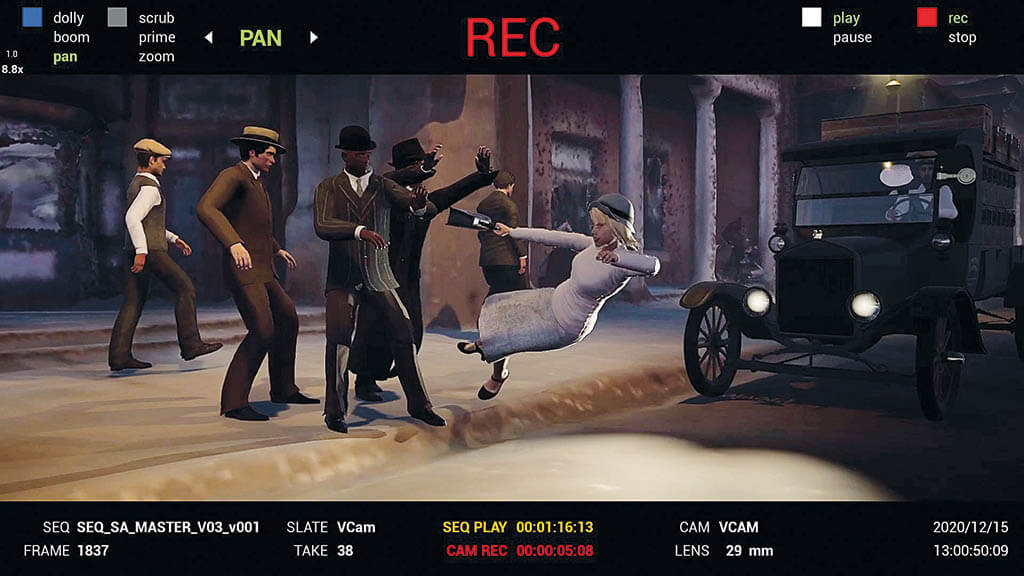

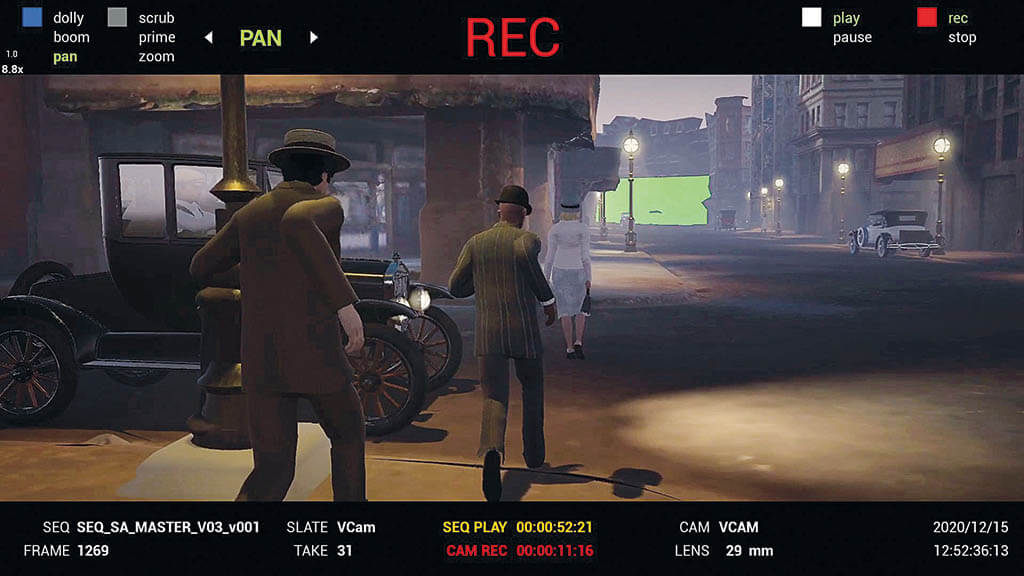

On Amsterdam, Proof’s ‘viz’ work was directly used by the filmmakers for a period New York sequence. It started with a virtual production set build of 1930s New York and then blocking of animation in Maya before moving into Unreal Engine 4. “Then we were ready for our virtual camera scout with Cinematographer Emmanuel ‘Chivo’ Lubezki and VFX Supervisor Allen Maris,” details Proof Previs Supervisor George Antzoulides. “The filmmakers were able to use our virtual sandbox to plan out complex shots and camera movements before filming ever began.”

“With a scene that required visual effects work in nearly every shot, with Proof’s help, we were able to create a master scene file and then using the VCAM, go in and block out the camera moves with Chivo,” says Maris. “This allowed us to figure out the bluescreen details on the main unit set and then to plan the specific plate needs in New York.”

“As the only sequence they were going to previs,” adds Proof Head of Production Patrice Avery, “Allen really wanted to use it to help inform the mood of the overall shoot. He wanted to capture the noir, low-light, period New York feel. It also wasn’t an action scene as much as an accident that just happens that we needed to figure out.”

The result, shares former Proof Inc. Virtual Art Department Lead Kate Gotfredson, now with ILM, was that previs and virtual production gave the filmmakers a way to explore all options. “It was a road map that helped drive the rest of the production.”

GOING FOR NEAR REAL-TIME

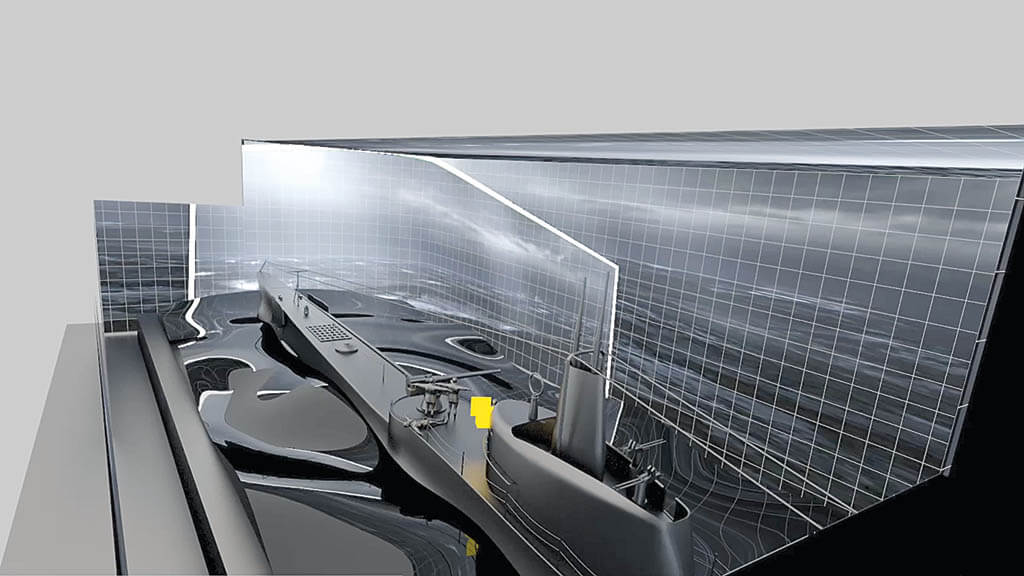

Getting an early sense of what will be in the final frame has become one aim – and benefit – of virtual production. For Edoardo de Angelis’ World War II submarine film Comandante, Visual Effects Designer Kevin Tod Haug enlisted a group of virtual production professionals to build what he describes as a ‘near real-time’ workflow for the film.

These included Foundry with its Unreal Reader toolset and AI CopyCat tool used for real-time on-set rotoscoping and compositing, Cooke with its lens calibration and metadata solutions, in-camera VFX approaches from 80six, High Res and DP David Stump, previs from Unity, and visual effects R&D and finals from Bottleship and EDI.

Previs first proved very effective in finding storytelling moments. “The previs definitely impacted the way Edoardo shot the movie,” describes Haug. “He had this idea that the camera should feel like a member of the crew on the boat, but mostly that didn’t work so well, especially since a real submarine is quite thin. It became pretty clear that you had to be able to reach out with the Technocrane and shoot scenes ‘off-boat,’ and that’s how we did the shoot.”

Furthermore, the on-set real-time compositing workflow and pre-made virtual environments put accurate templates of the final shots on the director’s monitor. While production was still ongoing in Italy, that delivered one of the major benefits of adopting a virtual production approach: some very advanced rough cuts.

“Everyone’s looking at shots that start to verge on what is usually called post-vis,” Haug says, “and we haven’t even finished production yet, so it’s not final pixels in camera, but by the time Edoardo is done with his director’s cut, he’s going to know what the movie looks like. That’s streets ahead of the old days.”

VIRTUAL PRODUCTION SHOWS ‘WHAT’S GOING TO GO THERE’

Beginning on Robert Zemeckis’ performance capture film A Christmas Carol as a digital effects supervisor, and then working with the director as Production VFX Supervisor on such projects as The Walk, Allied, Welcome to Marwen, The Witches and Pinocchio, Kevin Baillie has continually been exploring the storytelling benefits of virtual production.

“I think Bob Zemeckis has adopted virtual production so enthusiastically over the years because it empowers him to have more control over the filmmaking process and be closer to the filmmaking process while making the film,” says Baillie. “There’s nothing worse for a director than to have to sit there on set and be looking at a big bluescreen and have no idea what’s going to go out there. What virtual production does is it just shows everybody, ‘This is what’s going to go there,’ if you’re using a simul-cam or, say, an LED wall.”

On Pinocchio, Baillie sought to capitalize on numerous virtual production techniques, including virtual real-time environments made in Unreal Engine by Mold3D, previs utilizing those environments by MPC, virtual stages and animation brought together by Halon, simulcam tech from Dimension Studio and set-wide camera tracking by RACELOGIC.

Interestingly, LED walls were not used, although a variation – ‘out-of-view’ LED walls devised by Dimension Studio for interactive lighting – came in handy.

“All of this meant we actually shot and cut together a temp version of almost the entire movie before we hammered a single nail into a real set,” Baillie advises. “And then it meant we had ways to view live-action actors with CG characters during filming. Also, our DP, Don Burgess, could set lighting looks with real-time tools. These real-time and VP tools all meant we could focus on and plan for all the eventualities that were in the movie.”

THE ESSENCE OF A PERFORMANCE

When a life-sized dancing and singing reptile was required for Lyle, Lyle, Crocodile, the filmmakers quickly recognized that telling this story would require some kind of performance capture and virtual production approach for achieving the character in pre-production, production and into post. So, they engaged Actor Capture.

“We presented a solution early on to visualize a scale-accurate 6-foot 1-inch Lyle with an actor of the same height, later portrayed by Ben Palacios,” outlines Motion Capture Supervisor James C. Martin. Palacios wore an inertial suit, head-mounted camera and proxy pieces of tail and jaw during filming.

“We then had to work out how to deliver Lyle in real-time, in camera and with zero percent latency for face, body and hands,” Martin says. “We implemented workflows in both Xsens’ MVN Studio and Unreal Engine 4 to achieve the on-screen feed directors Josh Gordon and Will Speck requested.”

Martin suggests that this approach, from a production and storytelling point of view, had several benefits. Firstly, the live-action actors had something to interact with, and then the filmmakers immediately had a performance to review and carry through to post-production.

Ultimately, the impact was to save time and money while still allowing for intricate scenes that would feature a complex CG character. “Hundreds of cleaned takes were provided with accurate time-coded synced data that the animators could review directly from the VFX witness reference cameras,” Martin adds.

“Actor Capture also provided a way for the directors and VFX Producer, Kerry Joseph, to audition Lyle in the beginning of the film and complete the run of show with visuals to coincide with what was already in editorial. A big cost solution that was fixed in pre-production.”

BLACK ADAM EMBRACES VIRTUAL PRODUCTION

Cinematographer and Virtual Production Supervisor Kathryn Brillhart has spent the past several years immersed in virtual production: managing volumetric capture and VAD teams, working as a cinematographer consulting with studios in the area, directing her own short film that utilizes LED walls and real-time tools, and serving as Virtual Production Supervisor on Black Adam and the upcoming Rebel Moon and Fallout. That makes her well-placed to engage with the myriad ways that productions are adopting virtual production, as well as to work with a wide range of directors. “The director and content drive what virtual production is on a project,” notes Brillhart, “and the goal is to create workflows that support the story and their creative vision.”

On the production of Black Adam, just some of the VP approaches included: real-time and pre-rendered CG environments for playback on LED walls (produced by UPP, Wētā FX, Scanline and Digital Domain), facial and performance capture, and synchronization between those elements with robotic SPFX rigs and motion bases to emulate flying, helicopters, driving and flybike chases.

“One of the biggest challenges on a VFX-driven project like this is actually capturing as much closeup footage of the actors as possible during production to improve the realism of the shots later in post,” Brillhart says. “It was also important to capture accurate interactive lighting on subjects in-camera and use final pixel ICVFX to make complex VFX shots less complex and simple VFX shots possible to capture in-camera.”

“Also,” says Brillhart, “one of the most cutting-edge techniques we used was the combination of real-time environment playback in Unreal Engine displayed on the inside of a small, closed LED volume, with cameras mounted inside the rig at every angle (360 degrees), creating video volumetric capture of ‘The Rock’s’ performance. This process created a super-photorealistic digital puppet of any actor we captured.”

Continues Brillhart, “It was a great collaboration between Eyeline Studios’ technology and Digital Domain’s Charlatan FACS procedure that gave us high-quality digital characters to use. Due to the position of the cameras inside the rig, new camera moves could be created after the capture process as well. Our director, Jaume Collet-Serra, was really open to this process. From prep to production, the use of virtual production techniques ended up benefiting every department on the project.”