By IAN FAILES

Can light fields change visual effects? The answer to that question might still be uncertain, but it is possible they are already changing how immersive experiences are captured and presented to viewers. What impact light fields will have on VFX is a question that has been asked several times in recent years, as an increasing number of academic researchers and companies experiment in the light fields area.

Among the possibilities are new ways of acquiring images and new approaches to compositing (although the death of greenscreen thanks to light fields may be slightly exaggerated). VFX and CG artists, and researchers working in VR and AR, have certainly already found that light fields open up the degrees of freedom available to a user in immersive experiences.

VFX Voice canvassed a few experts in the field to find out about some of the latest research being done in light fields to see how it might impact visual effects and filmmaking in the near future.

Think of a light field as all the light that goes through a particular area or volume of space. That quickly becomes important in filmmaking and visual effects when it comes to cameras. A typical camera captures only the rays of light that enter through its single lens. To capture a light field, multiple lenses generally need to be used.

This ‘array’ of cameras or lenses ultimately gives the viewer a number of vantage points. In processing that imagery – which can be from scores of vantage points – you can ascertain details about the intensity of light, its angular direction and more. It also means things can be done with the captured light field that cannot typically be done with 2D images or video.

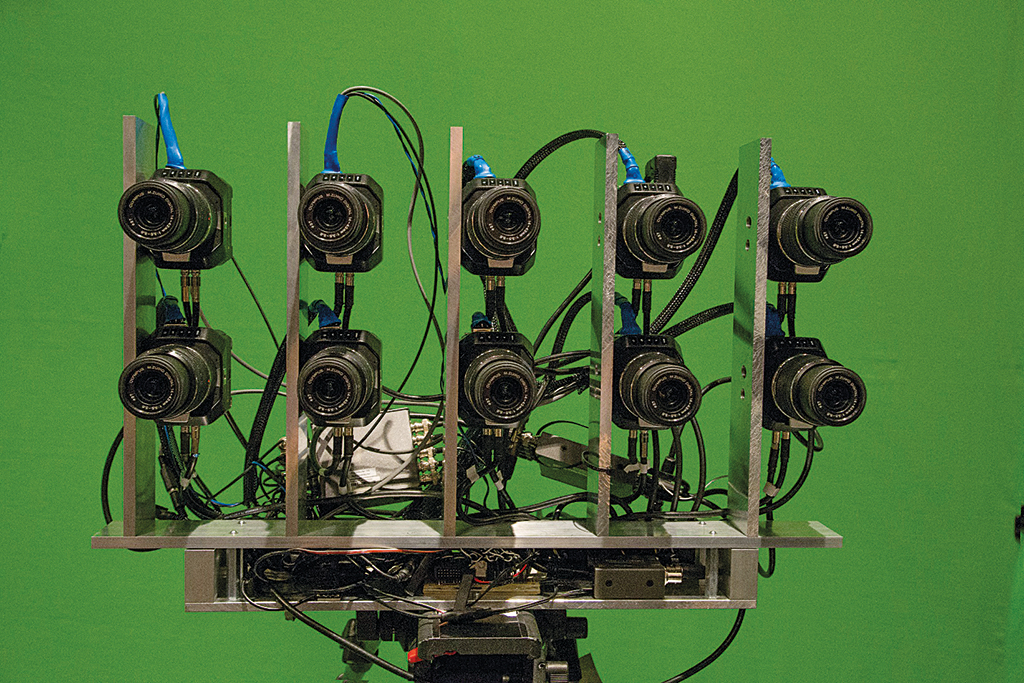

Dr. Joachim Keinert, Head of Group Computational Imaging at the Fraunhofer Institute for Integrated Circuits in Germany, has been leading a team in the building of multi-camera arrays for post-production and virtual reality applications. Keinert sees light fields as a possible key contributor to the world of visual effects.

“By capturing multiple perspectives from a scene, light fields preserve the spatial structure of a scene,” he says. “Light fields allow us to generate the correct perspective of a scene for any arbitrary virtual camera position. Compared to other technologies for 3D reconstruction, light fields excel by their large number of perspectives and hence by their capability to handle occlusions. Occlusions occur when one object is hiding another one while trying to compute a virtual camera position. This is a challenging problem that can be particularly well handled with light fields.

“Moreover,” continues Keinert, “they can preserve view-dependent appearances, as for instance occurring for specular objects. If your eyes fix a certain point of such a specular object while moving your head, you will observe that the color and the luminance of this point changes. Since light fields capture a scene from many perspectives, they can record such effects. Finally, you can compute very good depth maps because of the large number of captured perspectives.”
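Why many perspectives help with depth can be illustrated with simple triangulation. The sketch below is not Fraunhofer's method; it assumes an idealized, rectified camera array in which a point's pixel shift (disparity) between two cameras is proportional to the distance between them (the baseline). The function name, focal length and measurements are hypothetical values chosen for illustration.

```python
def depth_from_disparities(focal_px, baselines_m, disparities_px):
    """Estimate depth (metres) from several camera pairs.

    Assumes the pinhole relation disparity = focal * baseline / depth,
    and fits the slope (focal / depth) by least squares through the origin.
    """
    num = sum(b * d for b, d in zip(baselines_m, disparities_px))
    den = sum(b * b for b in baselines_m)
    slope = num / den  # approximates focal_px / depth
    return focal_px / slope


# Hypothetical setup: 1000 px focal length, a point 2 m from the array.
focal = 1000.0
baselines = [0.1, 0.2, 0.3]          # metres between camera pairs
exact = [50.0, 100.0, 150.0]         # ideal disparities for 2 m
noisy = [51.0, 99.0, 150.0]          # the same measurements with pixel noise

depth_exact = depth_from_disparities(focal, baselines, exact)
depth_noisy = depth_from_disparities(focal, baselines, noisy)
print(depth_exact, depth_noisy)
```

Each additional camera pair adds another measurement to the fit, which is one reason a dense array can tolerate noise in any single view better than a stereo pair can.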

Keinert’s team also sees light fields as a promising way for bringing and adding photorealism to VR and AR applications. “Light fields can provide the correct scene perspectives without recurring to any meshing,” notes Keinert. “By these means, they are particularly well suited for live or real-time workflows and applications where reality should be reproduced as faithfully as possible.”

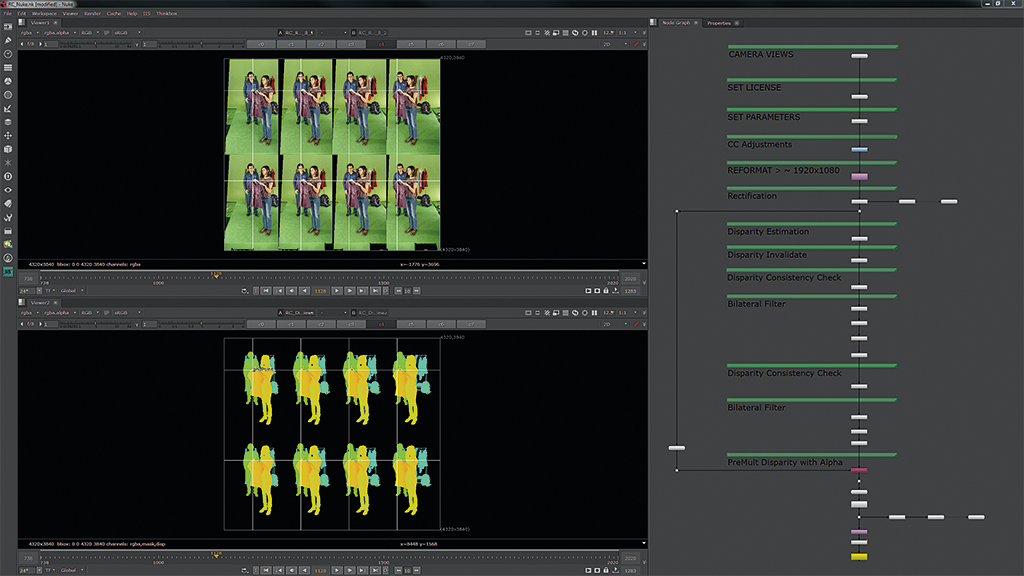

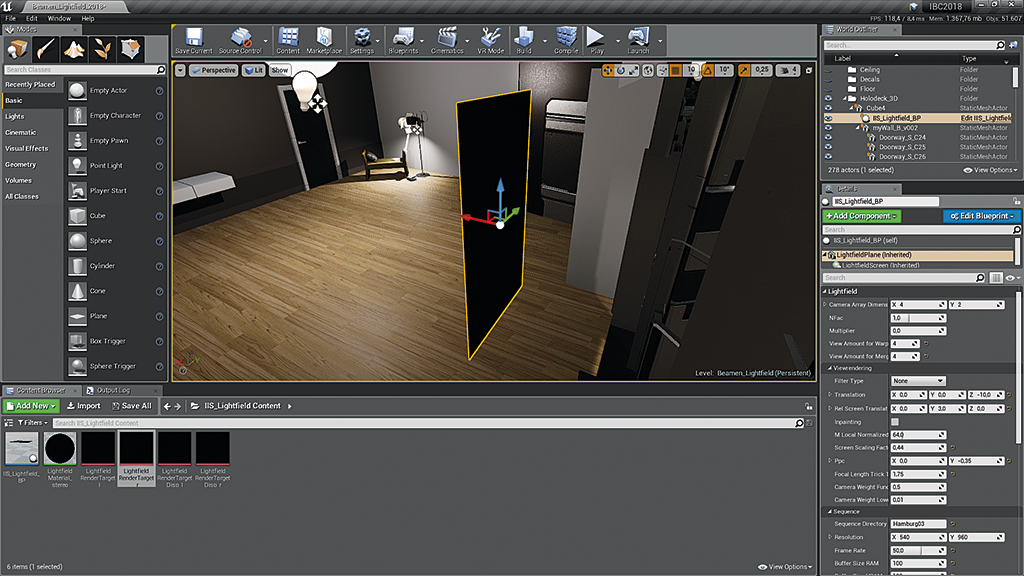

A Fraunhofer IIS-developed plugin called Realception allows for playback of light-field-captured data. Realception for Nuke provides editing and processing features to handle light field data sets, while Realception for Unreal provides a shader that allows embedding the processed light field data set into a CG environment. “Both plugins are heavily used in our internal research,” says Keinert, “because they provide the flexibility to adapt the algorithm parameters to the captured footage.”

Keinert believes the larger the camera array, the more useful light fields can be to the visual effects industry. “This however requires massive capture systems, huge data volumes and long computation times,” he says. “To solve these challenges, we need to come up with algorithms that can reconstruct the light fields from a sparse sampling with highest possible quality. The challenge is to make them perfectly and smoothly fit into today’s available film and virtual reality workflows.”

Meanwhile, at Saarland University in Germany, Professor Thorsten Herfet and his team have been part of the EU research and innovation project called SAUCE, which is aimed at re-using digital assets in production. As part of that project, a trailer about a musician, called Unfolding, was filmed using a bespoke light field camera in multiple configurations to test and demonstrate the research.

Out of that trailer, Herfet noted the group had come to several findings about the use of light fields in filmmaking. These include, he says, the ability to achieve “flexible depth of field beyond the physics of a single lens. This as well refers to free positioning and partially free of the focal plane as to apertures not practically possible with a single lens.”
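One common way to get this kind of after-the-fact focus control from a camera array is "shift-and-add" refocusing, sketched below. This is a generic textbook technique, not necessarily what the SAUCE team used. The example assumes a hypothetical row of five cameras, one-dimensional images, and a sign convention in which a point shifts by `disparity` pixels per camera step.

```python
def refocus(views, offsets, disparity):
    """Shift each view by offset * disparity pixels, then average.

    Points whose true disparity matches line up and stay sharp;
    everything else is smeared across the synthetic aperture.
    """
    width = len(views[0])
    out = []
    for x in range(width):
        total, count = 0.0, 0
        for view, off in zip(views, offsets):
            src = x + round(off * disparity)
            if 0 <= src < width:
                total += view[src]
                count += 1
        out.append(total / count if count else 0.0)
    return out


def make_view(width, position):
    """A dark 1D image with a single bright pixel at `position`."""
    row = [0.0] * width
    if 0 <= position < width:
        row[position] = 1.0
    return row


# Hypothetical 5-camera array; offsets are camera positions in baseline units.
offsets = [-2, -1, 0, 1, 2]
# One bright point: at x=10 in the centre camera, moving 2 px per camera step.
views = [make_view(21, 10 + 2 * off) for off in offsets]

sharp = refocus(views, offsets, disparity=2)    # focal plane on the point
blurred = refocus(views, offsets, disparity=0)  # focal plane elsewhere
print(max(sharp), max(blurred))
```

Because the focal plane is just a parameter of the averaging step, it can be chosen freely after the shoot, and the width of the array plays the role of an aperture far larger than a single lens could offer.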

Herfet adds that depth-based image rendering is something that also looks promising. “The tools for the generation of depth maps evolve very fast. Light fields make the task simpler, compared to, for example, the portrait mode of modern smart phones, and offer a significant number of rays to choose from. Hence, even objects partially occluded can be made visible, or look through, hazy or turbid foreground.”

Still, right now the light field camera developed for Unfolding requires some time to set up and calibrate. Herfet would like aspects such as focus and aperture to be controlled electronically and jointly for all cameras, for example. “I would tend to say that – comparable to current technology – the array itself could be fixed and solidly mounted, so that different variants can be used for different shooting and purposes. The back end, servers and storage, can then be connected to each of those rig heads, so that consistent usage of monitoring, processing and storage is ensured.”

Several companies have engaged in light field research, directly and indirectly in relation to visual effects. Among those is Light Field Lab, Inc., which is aiming to use light fields in the generation of holographic displays, including by looking for new ways to handle the vast amounts of data produced.

“The physics involved in holographic display and data transmission are extremely complex,” notes Light Field Lab CEO and co-founder Jon Karafin. “Historically, the large data footprint required for rasterized light field images has been a significant barrier to entry for many emerging technologies. At Light Field Lab, we are developing real-time holographic rendering technologies to enable the future distribution of light field content to every device over commercial networks.

“In support of this vision,” adds Karafin, “we have co-founded the standards body IDEA (Immersive Digital Experiences Alliance) alongside CableLabs, Charter Communications, OTOY, Visby and a growing list of other industry experts, with the goal of providing a royalty-free immersive data format for efficient streaming of holographic content across next-generation networks.”

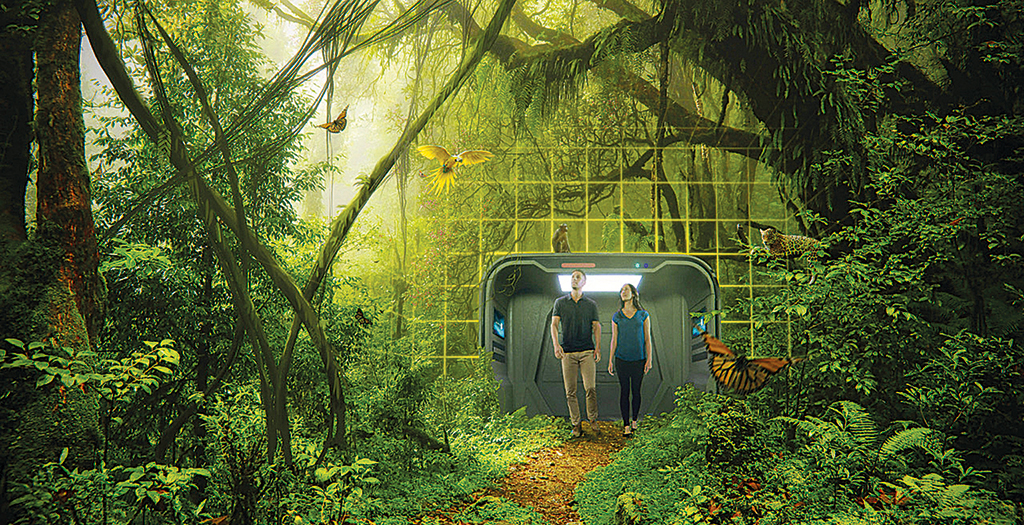

Karafin is confident that light fields will be an industry standard for future productions. “Today, there are already dozens of studios creating light field and volumetric assets for virtual as well as live-action scene reconstruction. Additionally, recent productions including First Man leveraged 2D LED video wall displays on set to directly render virtual backgrounds for in-camera live-action capture without a greenscreen. In the future, we believe there is great potential to incorporate holographic video walls in production to directly project real environments that are indistinguishable from a physical location.”

Paul Debevec, VES, is very familiar to many in visual effects based on his research into high dynamic range imagery, image-based lighting and the Light Stages. Now, as Senior Staff Engineer at GoogleVR, Debevec has been responsible for helping to research light fields and their application to virtual reality.

That culminated in the VR experience “Welcome to Light Fields,” available as an app on Steam VR for HTC Vive, Oculus Rift and Windows Mixed Reality. The idea of the piece was to enable a viewer to experience several panoramic light field still photographs and environments. In a presentation at SIGGRAPH 2018 in Vancouver called “The Making of Welcome to Light Fields VR,” Google team members noted that most VR experiences “offer at most omnidirectional stereo rendering, allowing a user to see stereo in all directions (though not when looking up and down, and only with the head held level) but not to move around in the scene. To address this, we developed an inside-out spherical light field capture system designed to be relatively easy to use and efficient to operate.”

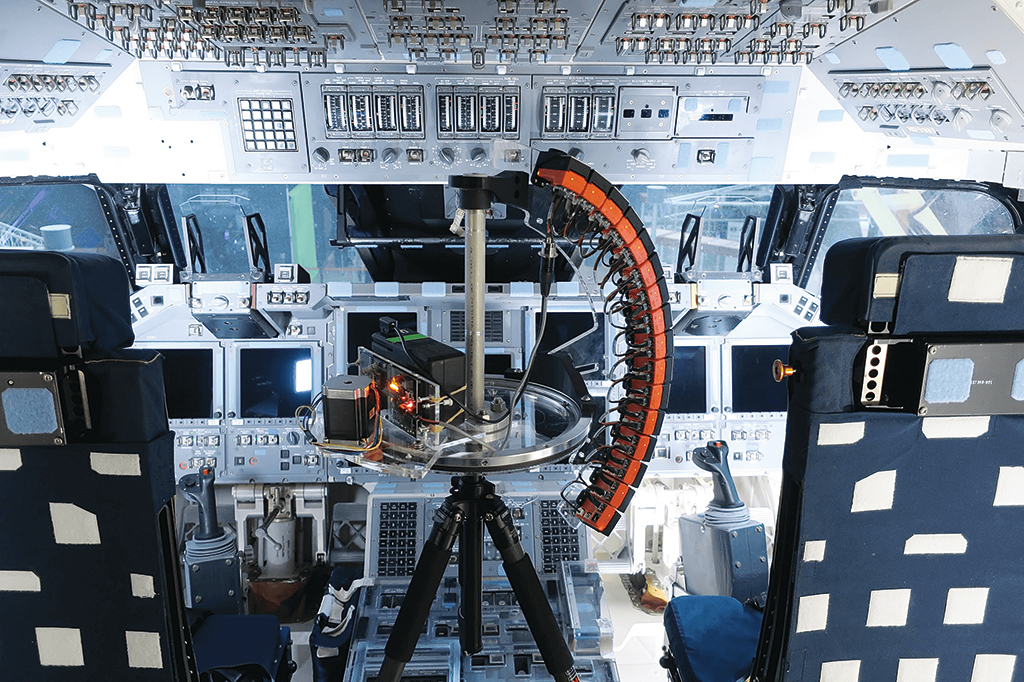

One of Google’s light field experiences in “Welcome to Light Fields” involved the flight deck of the Space Shuttle Discovery, captured with a specialized rig: a GoPro Odyssey Jump camera modified to carry 16 cameras on a spinning arm. Within the explorable volume of the experience, the user could move with a full six degrees of freedom (DOF). “Welcome to Light Fields” was also a showcase for efficient rendering of real-time light field VR experiences. The team has since worked on an improved capture rig, fully synthetic scenes with light fields and light field video.

There are other companies working in the light field space, too, such as Creal3D and reportedly Magic Leap, that are hoping light fields will aid in more mixed reality experiences, possibly to the point that the technology is reduced to wearable glasses rather than goggles. Indeed, much of the light fields research seems to be applicable more to immersive experiences, although a few short years ago it was touted as a technology that, thanks to depth compositing, may make greenscreens obsolete.

But that future has not really arrived so far. Experienced Visual Effects Supervisor John Berton Jr., who worked at Lytro for a period before the light field company shut down operations, says depth compositing was an area he and his colleagues had been investigating at the company.

“One of the biggest problems with creating light fields, if you really want good depth information for every pixel, you have to have more than one layer of depth,” observes Berton. “This is the classic problem for so-called depth compositing versus deep compositing. The first layer of information from a light field that you’re going to be able to get from photography is more akin to depth where you have a single value of z-depth per pixel.

“One of the problems that we were trying to solve at Lytro was that we can’t do anti-aliasing because we don’t know what the pixel behind it is. So we started developing ways of figuring out how can we stack into each pixel – like true deep compositing – layers of pixel depth values. You can do it if you have a synthetic image, but if you’re working with live-action photography, which is what we were trying to do, then it’s a whole different problem. But even with synthetic imaging now, blending those pixels against the pixels behind is a very complex anti-aliasing problem. And the answer, up to this point, is to use higher resolution! Which of course is more computation. But what’s going on in terms of extracting that information from photographic extractions of light fields, that remains an unsolved problem.”
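The distinction Berton draws can be made concrete with a single edge pixel. The sketch below is an illustration only, not Lytro's pipeline: it assumes premultiplied colors, a single grayscale channel, and hypothetical values for a half-transparent foreground edge in front of a wall, with a CG element to be inserted between them.

```python
def over(front, back):
    """Premultiplied 'over' operator on (color, alpha) pairs."""
    fc, fa = front
    bc, ba = back
    return (fc + bc * (1.0 - fa), fa + ba * (1.0 - fa))


def flatten(samples):
    """Deep compositing: merge (depth, color, alpha) samples, nearest first."""
    result = (0.0, 0.0)
    for _, color, alpha in sorted(samples, key=lambda s: s[0]):
        result = over(result, (color, alpha))
    return result


def depth_test(photo_color, photo_z, cg_color, cg_z):
    """Depth compositing: one z per pixel, so the nearer value simply wins."""
    return photo_color if photo_z <= cg_z else cg_color


# Hypothetical edge pixel: a 50% covered foreground in front of an opaque wall.
fg = (1.0, 0.4, 0.5)   # (depth, premultiplied color, alpha)
bg = (5.0, 0.2, 1.0)
cg = (3.0, 1.0, 1.0)   # opaque CG element placed between them

# Deep: every layer is kept, so the CG element shows through the edge.
deep_result = flatten([fg, bg, cg])

# Depth: the photograph already blended fg over bg and stores only the
# nearest z, so the wall stays baked into the pixel and the CG is rejected.
flat_color = over(fg[1:], bg[1:])[0]
flat_result = depth_test(flat_color, fg[0], cg[1], cg[0])
print(deep_result, flat_result)
```

With deep samples the half-covered pixel picks up the inserted element behind the foreground; with a single z value it cannot, because the information about what lay behind the edge is already gone. Recovering those stacked samples from photography is the part Berton describes as unsolved.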

Berton says more research in machine learning or artificial intelligence relating to computational photography and light fields may well help with extracting the right kind of depth information.

“Finding the edges, that’s the hard part. And making those edges extremely fine is also difficult because there’s just too much noise in the picture. However, if you have someone who can go in and show you where that line is supposed to be and you get enough information of that nature, then you can use it to train a network to assist the computation of photography with finding that edge. It’s a really, really interesting prospect.”