By CHRIS McGOWAN

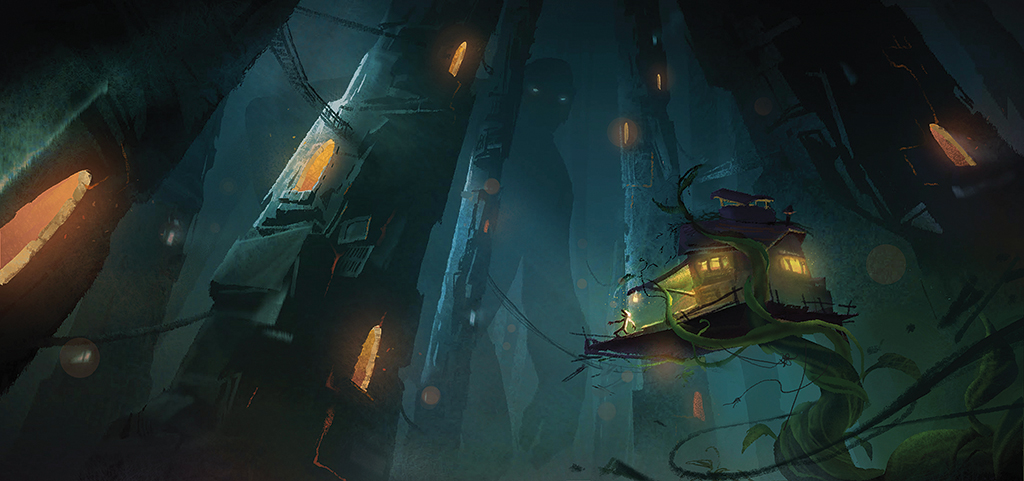

In Baobab Studios’ VR theater experience Jack: Part One, you don a headset and step into the shoes of the titular possessor of the magic beans. A physical stage transforms into a fantastical world above which looms a formidable giantess (voiced by Lupita Nyong’o). You roam about, grasp objects, and talk to a character played by an actor. You are part of the story. You bring the beans back home to your family, and your mother becomes furious and tosses them out a window. At night, a giant beanstalk tears the roof off the house, and you see a night sky and feel a cool breeze. You fly above the clouds on the wooden floor and enter the realm of the giants – you must evade them and bring back a certain magical golden harp. With tracking markers, motion capture and other modern tricks, the 1734 English fairy tale Jack and the Beanstalk has sprouted into something unprecedented. So too has virtual reality.

Just as VR has added immersive qualities to both films and video games, it’s also doing so for theater in various interactive experiences. Baobab’s Jack: Part One, DVgroup’s Alice: The Virtual Reality Play, Adventure Lab’s Dr. Crumb’s School for Disobedient Pets and Double Eye Studios’ Finding Pandora X are expanding the boundaries of storytelling by combining virtual reality with immersive theater and elements of escape rooms and video games. All the above experiences include live performances by real actors. “It feels like a mix of some things that we know and love and then there’s something else that feels more immersive. It’s like being inside the play,” says Kiira Benzing, Executive Creative Director for Double Eye Studios, about Finding Pandora X.

There is much more interest now in immersive experiences, according to Adventure Lab COO and Co-founder Kimberly Adams. “The more immersive and interactive the better,” she states. “Before the pandemic, we saw a huge rise in escape rooms, immersive theaters and location-based VR companies like The Void, Sandbox and Dreamscape. Millennials and Gen Z were also hanging out digitally on platforms like Fortnite, Minecraft and Roblox. Now, digital immersive has gained a lot of ground. During the pandemic, there was a massive and almost instantaneous shift in cultural behavior – we all learned how to live, work and play on digital platforms like Zoom. Next Gen has a growing desire to interact with their entertainment and have it interact back. That’s why we are seeing platforms like Twitch, Discord and Clubhouse take off – because of that feeling of intimacy and interactivity. We see a huge opportunity for a digital entertainment platform that creates connection through social play, performance and magical technology.”

One path for live VR theater is to go fully remote: both you and the actors can log in from anywhere, but you have to register for a show with a set time. Another path is for the experience to be a type of location-based VR with mapped physical sets that enable the audience to move about a stage and interact with one or more actors and physical objects. In some cases, sensory stimuli further enhance the reality of the experience.

Mathias Chelebourg, who directed Jack: Part One, has so far taken the second path for VR theater. “If, like me, you think of VR as a parallel world where your body is fully [immersed] in the narration, then you’ll look for every trick available to address the senses. To date, fixed, crafted set design with embedded haptic gears, where you can simulate smell, touch and even taste, is definitely the optimal approach because it’s the most organic way to make you feel the environment. Physicality in the context of live performance is to me the most efficient way to trick the viewer into believing he belongs to the fictional VR world.” Baobab Studios, an interactive animation studio that has won six Emmys, produced Jack. Mathias Chelebourg also directed Alice: The Virtual Reality Play (co-created by Marie Jourdren) and the VR experiences Doctor Who: The Runaway (from the BBC) and most recently Baba Yaga, also from Baobab. Chelebourg is based in Paris, where he has his studio, Atelier Daruma.

Both Jack and Alice engage the senses. In Alice, participants are even handed a meringue to eat. “Immersion is a tricky equilibrium. I think that’s the main difference between directing for traditional shows and directing for immersive content,” says Chelebourg. In VR, a director needs to take extra care with the amount of sensory stimuli given to the viewer at any given time. “Too much haptic feedback and you drive them away from the story, not enough and the magic will start to fade. But let us be honest, the most immersive part of Alice is the twisted play with the live performer. This connection is worth all the tech you can think of.”

Yet, tech is fundamental. “It takes a lot of tech to make you forget there’s any!” says Chelebourg. He used Unreal Engine and Unity “extensively” to support his shows. “Real-time engines are now at the core of all my creative process, with the companionship of a galaxy of handmade tools and custom software to merge the magic together.”

One example of practical tech in Jack was the set, which is “pretty massive. A fully movable pneumatic plate was built to create the ground rumble. I think the most immersive aspect of it was how far we pushed wireless motion capture to not only track the actors and the viewers, but also every single prop and set element that you can then lift, move around and play with, in the context of the narration. We also designed custom smells with a nose expert, heat and cold cannons, huge fans for wind blowing, etc.” To date, he comments, “Jack really is one of the most complete and insane multi-sensorial VR experiments [ever] attempted.”

Chelebourg thinks there is also a place for live VR theater where audiences participate remotely and do not share the same physical space as the play’s actors. “It’s here to stay! And it has proven powerful, especially [last] year when we spent half our time interacting remotely inside VR worlds for festivals, events and performances. We also held Baba Yaga’s premiere in VRChat [the social VR platform] with cast and press. I’m curious about it, I love to experiment with it [live remote VR theater].”

Live VR theater can be experienced at home or it can be on a set. Either way, at the moment it requires a great deal of flexibility on the part of the cast. Actors played multiple roles in both Jack and Alice. “It’s the beauty of live mocap performance,” says Chelebourg. “It’s at the same time part puppeteering, part traditional presence on stage. In Alice the actor plays three different roles, and in Jack the actress embodies two drastically different characters. I was very meticulous in casting performers with voice-shifting talents and mime-playing notions because to me that was key to make it work.”

Improvisation is a key element in this new field of live VR theater. In Jack, a character would engage the audience in conversation. “What surprised me was the sheer variety of reactions!” Chelebourg comments. “Jack was, like Alice, based on a pretty linear backbone script, and I am very careful how much room I give to improvisation. I want the viewers to feel free while they are following the invisible path designed for the story. But even with all the control you think you have, so many beautiful things happen, I had viewers dancing, singing, battling over Shakespeare poems with actors. And in the end the only conclusion I could draw is that it is complete madness to think you can anticipate all the possible behaviors in such a narrative device!”

He adds that improvisation “is not necessary, but it’s a powerful tool to tailor the experience for each viewer. I love to write around this constraint and work with actors on their side script and characters so they’re geared up to face most situations in there. The way I usually work is by starting to write a strong linear script the way I would for a traditional play. I then identify the best [moments] for controlled improvisation to happen. Then life finds a way!”

Adventure Lab’s Dr. Crumb’s School for Disobedient Pets, with its mixture of immersive theater and other elements, also includes a good amount of improvisation and is built around “bringing the escape room experience into your living room” with the help of VR and elements of role-playing games. It is experienced at home and features a live actor. Adventure Lab CEO and Co-founder Maxwell Planck adds, “Currently, our performers spin up their shows from the cloud at showtime and host their guests in VR from their homes. Guests can be anywhere in the world. We have run shows for over 1,000 people in 18 different countries, and we can run many, many shows simultaneously.” So far, the remote approach with actors makes larger audiences possible, as compared to fixed-location VR theaters with actors.

Dr. Crumb’s requires signing up for a showtime. Notes Planck, who worked for 10 years as a technical director at Pixar and was a co-founder of Oculus Story Studio, “When you launch our app and enter the world of Dr. Crumb’s School for Disobedient Pets, you are greeted by an in-game character performed by a real person. Their job is to be your host, your guide, your ally and your nemesis. You can see how we’re combining all of the elements of an escape room clue master, D&D dungeon master and improv performance. In the end, it’s not about whether the players or the host wins. The host is incentivized to just make sure everyone has a blast.”

Adds Adams, a VFX producer and a producer at Pixar Animation Studios prior to Adventure Lab, “After a lot of trial and error, we landed on a good balance of scripted and improv interactions with the performer; enough narrative structure so folks know what the world is, who they are in relation to it and what is expected of them, and enough improv to make it feel special and unique. Ultimately, people really want to feel seen and heard.”

When the performer has too much dialog and explains something for too long without any back-and-forth with the audience, the latter goes quiet. “Growing up,” Adams explains, “we learn that when an actor is performing, we are expected to sit back and be quiet in order to be a good audience member. That’s the opposite of what we want for them in our experience! They need to feel safe, comfortable and be good verbal communicators as they interact with the performer and their team. If we have done our job right, within five to 10 minutes they should feel confident and like they have a license to play.”

“It’s getting easier and easier for VR developers to make content,” Planck says. “We use Unity, Maya, Blender and Substance. For our cloud infrastructure, the space where the master client is running, we’re leveraging several AWS services including S3, EC2, SQS, Lambdas, Gateway API. For our web app tools that allow our hosts to schedule and operate their own shows, we’re using AWS’s rich Amplify tools, building on ReactJS, DynamoDB, Cognito and GraphQL.”

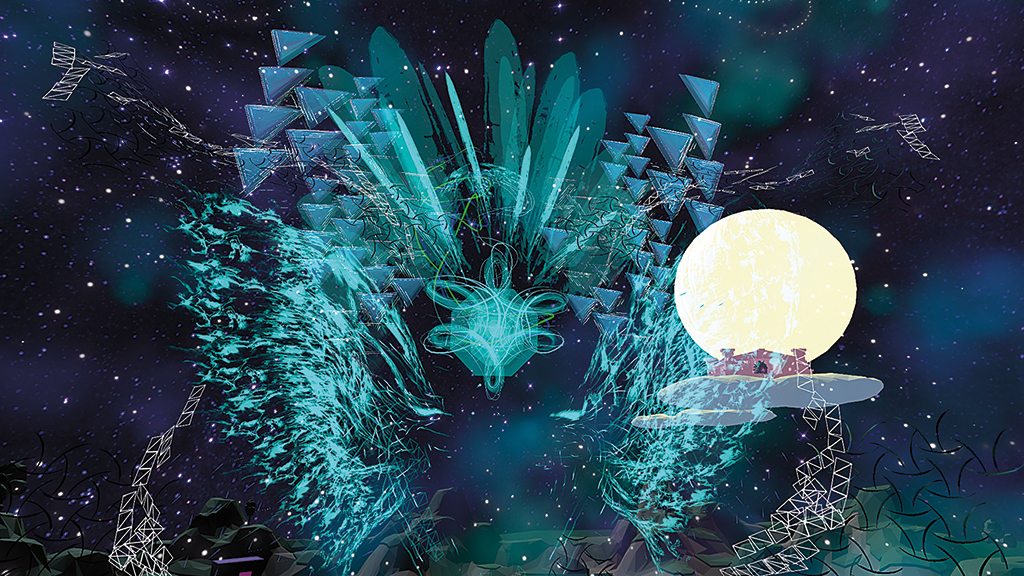

For Finding Pandora X, “Unity is definitely the backbone. Unity, VRChat, and originally we also worked with this platform LiveLab for the live-streaming aspect,” says Double Eye Studios’ Kiira Benzing. The immersive live VR theater experience Finding Pandora X puts the audience in the role of the Greek Chorus, and they interact with real actors as all seek to find Pandora and her box of hope. Finding Pandora X won the award for Best VR Immersive User Experience at the 2020 Venice International Film Festival.

Hewlett Packard helped the Finding Pandora X production with VR headsets and workstations, as well as technical and financial support, according to Joanna Popper, HP Global Head of Virtual Reality for Location Based Entertainment, who served as executive producer. She notes, “HP is proud to be working with Kiira Benzing and Double Eye Studios on Finding Pandora X. Their award-winning, groundbreaking storytelling has proven to captivate and connect global audiences.”

Benzing emphasizes that the Pandora VR theatrical experience is quite different from traditional theater. “When I am rehearsing my actors in their avatars, and I am in an avatar, there are elements that feel the same [as traditional theater], and then there are elements that feel utterly new, such as flying to our ‘places’ at the top of the show.” In Finding Pandora X, one moves throughout the production, teleporting through a vast landscape as the story progresses. When the story branches, one chooses which quest one will embark on. Both quests have puzzles, but one quest is built on more logic-based puzzles and the other on more action-based puzzles.

Each player is considered “a member of the story,” explains Benzing. “In this production, the Greek Chorus reveals important information to the main characters. Another key theme in our work is the principle of collaboration. The members of our Greek Chorus have to collaborate with each other to solve tricky situations during the quest part of the storyline. With that role comes responsibilities, from speaking at key moments, to solving puzzles, to aiding the main characters. It’s quite immersive but also different than immersive theater.” For example, participants can defy the limitations of physical reality, such as by flying. They can also interact with narrative objects.

Finding Pandora X has hosts in the “Cloud Lobby” to guide users after they arrive, Benzing explains. “Two of our characters, Hermes and Iris, are played by actors from our cast, and they interact with you to help you familiarize yourself with menus and controls. But they do this while improvising with you and peppering in commentary about the storyline and the main characters. It has been a delight to get our actors so technically savvy that they can help to onboard the audience and also give them story clues.”

“As we keep working on the narrative and structure of these shows,” observes Benzing, “I feel like it keeps revealing new things to me. We are taking risks in the narrative. I am sure some people don’t understand what we are doing. But by trying something new it also feels like we are getting somewhere – we are on our way to developing XR narrative and XR storytelling. I believe [the] unique blend of interactive, immersive theater and VR could be considered a new art form. It feels daring to say so, but it also feels regularly, mind-bendingly different.”