By MICHAEL GOLDMAN

As the visual effects community ponders what’s next for the industry, an ironic dichotomy colors the discussion. On the one hand, we are in “a Golden Age of visual effects, and we should take time to recognize that and celebrate it,” suggests Jim Morris, VES, General Manager and President of Pixar Animation Studios, and a veteran VFX producer and Founding Chair of the Visual Effects Society.

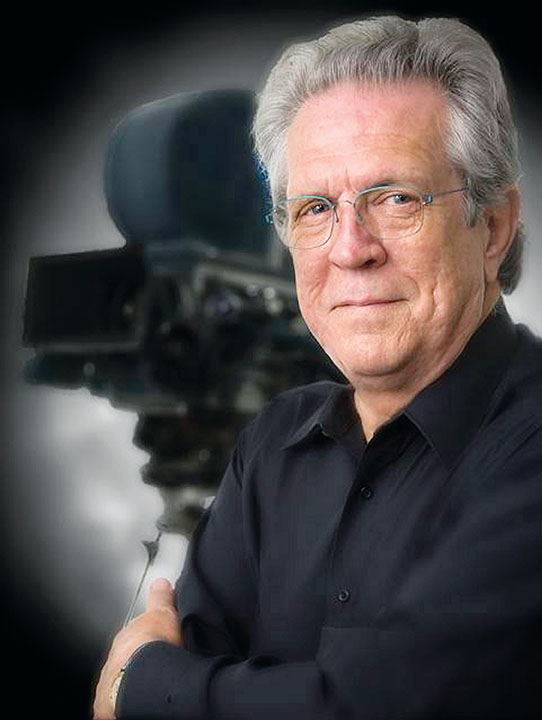

Morris, of course, is referring to revolutionary breakthroughs currently evident in a wide range of recent motion pictures and, increasingly, on television and in other media. Indeed, Visual Effects Supervisor Rob Legato, ASC, who shared last year’s visual effects Academy Award with Adam Valdez, Andrew R. Jones and Dan Lemmon for stunning work on The Jungle Book using sophisticated virtual production techniques, suggests those techniques are being advanced further at this very moment with work currently underway on 2019’s The Lion King and other projects. He points out that, “In any given year, something like eight of the top 10 grossing movies are most likely to be visual effects-oriented films. That is a very healthy assemblage of films that greatly depend on the visual effects industry for the core of their existence.”

On the other hand, “the ability to do absolutely anything and the propensity for studios to spend tens of millions of dollars on visual effects for [tentpole] style comic-book movies has basically turned visual effects into a commodity,” says pioneering Visual Effects Supervisor Richard Edlund, VES, ASC.

“On any given day, every studio has at least a thousand shots in the loop,” he adds. Thus, there is concern about issues such as saturation of the industry, a glut of artists competing for lower compensation, tightening timelines and shrinking budgets that affect quality, and the potential devaluing of artistry and craftsmanship by studios.

For instance, “I’m constantly amazed by how much pressure we get from studios and clients to make repeated creative changes without additional pay, meet shorter post schedules, and to chase tax breaks around the world,” says ILM Visual Effects Supervisor Lindy DeQuattro. “If you were remodeling your kitchen and told your contractor you wanted granite, then saw the granite and said, ‘no, actually, I’d like to see some marble, or let’s try quartz, and then never mind, let’s put the granite back in,’ you would certainly expect to pay for all those materials and the additional labor.”

Putting the great changes in the visual effects industry in context, just in the 20 years since the VES was founded, and looking ahead to what might come next, requires recognizing that major technical, creative and business shifts are inexorably intertwined. The precise direction and impact of these shifts, however, cannot be fully predicted. For that reason, Dr. Ed Catmull, VES, Pixar’s Co-founder and now President of Pixar and Walt Disney Animation Studios, suggests facilities need to take bold risks with new technologies while simultaneously planning for outcomes ranging from wild success to abysmal failure.

“One recurrent theme is that when a new technology arrives, it initially does not work very well, but may show potential,” Catmull explains. “Sometimes, you will try it, or even buy it, and you will find out it actually does not live up to the hype. And sometimes, having tried it early will give you a great heads-up on things. But if a new thing appears and does not work well initially, some people will therefore conclude that makes it a bad idea, but they might be premature in concluding that. You still have to evaluate something by what its potential might be and move forward. Related to that, most of these things will have a horizon of about four or five years of going right or going wrong. You don’t know, if something goes wrong with a [technology], if a software or computer vendor is going to correct it or be blind to it, or if something will go wrong with their company as a result. So you have to constantly pay attention to the symptoms, and that should give you four or five years to prepare if something does go wrong.”

Clearly, virtual production techniques, unprecedentedly powerful software tools and rendering systems, motion-capture and facial-capture breakthroughs, Cloud computing, the rise of sophisticated previs techniques, machine-learning/AI tools, and much more are at the forefront of the industry’s forward-looking conversation. At the same time, so is the restructuring of the industry landscape: how facilities are built and managed, and what their infrastructures and missions should look like.

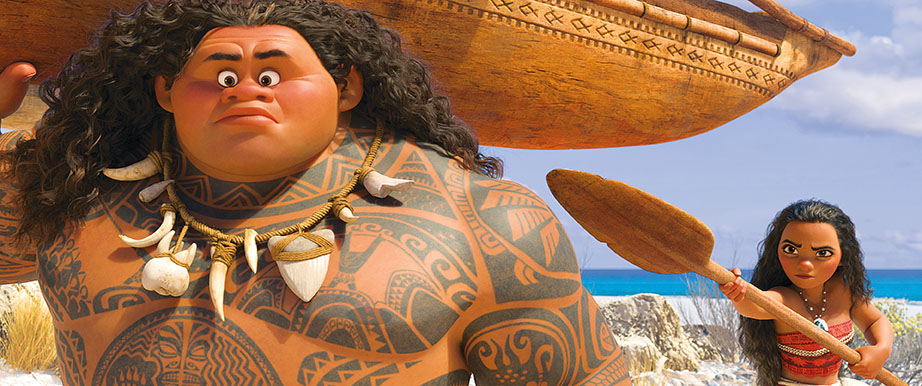

Industry veterans point out that some major companies closed during this revolutionary period, amid other forms of consolidation and automation, while others evolved successfully. Sony Pictures Imageworks, for example, remains a key player in producing not only high-end CG feature films, but also cutting-edge VFX for major live-action films. To get this done, Imageworks “migrated our headquarters in recent years, and the majority of our workforce, to Vancouver,” explains Randy Lake, President of Sony Studio Operations and Sony Imageworks. “This has been successful in sustaining the quality of our work, while leveraging tax incentives to keep our costs competitive and innovating in technologies that enable our artists to create fantastic imagery.”

VFX Voice recently conducted a wide-ranging conversation with several leading industry professionals to get a sense of the industry’s future direction, and several key themes emerged.

VIRTUAL PRODUCTION TECHNIQUES

Legato argues that the combination of the latest digital filmmaking tools and software with VR-related previsualization technology and game rendering engines, along with lots of innovative thinking, can now help filmmakers achieve “a movie rooted totally in reality, even though it was artificially created – we are going through great pains to make sure the artificial part is removed from the audience purview. Animals and environments can look absolutely realistic, and I was also proud how, in The Jungle Book, we had 150 digital double shots where I doubt the audience, or even most professionals, could detect the use of a digital human within the illusion.

“So that, to me,” he continues, “is the future of using this technology for filmmaking – not to do a special effects genre movie only, but to make regular films that you can increase in scope or size, meaning bigger themed films, because we can put actors believably into [situations] where we couldn’t before. This trend can apply to any real story about real people. The computer power is outrageous, the software is incredible, and we have new tools that allow us to previsualize much more efficiently. On my next film, we are now extending the virtual reality ability to preview the entire film using VR tools. You put on VR goggles and walk into fully realized, three-dimensional sets, move your head around and see things react as they would in the real environment. All of a sudden, it becomes perfectly natural to invent shots and camera moves, and decide where characters or buildings go. Once [the technique] is figured out, the wheel is created. Now, other people can pick up on it for all sorts of applications.”

Industry veteran Tim Sarnoff, Deputy CEO and President of Production Services for Technicolor’s portfolio of visual effects companies (MPC, The Mill, Mikros Image, Mr. X and Technicolor), suggests the speed with which these tools have been adapted to fit the needs of a filmmaking workflow has been almost as stunning as the tools themselves.

“While the fundamentals of artistry certainly have not changed, technologically, things have advanced tremendously,” Sarnoff says. “A decade ago, you could not have created The Jungle Book. That project applied dozens of new innovative technologies, including Cloud [rendering] technology, which was used to handle the hundreds of thousands of processing hours needed to render an entire rain forest in photographic detail. That’s an example of the convergence of immersive technologies with today’s established technologies, leaving an indelible mark on how this industry is evolving.”

The Mill, Sarnoff says, recently focused on bringing these two worlds together for the production of commercials and feature films.

“The Mill developed Blackbird, a virtual car rig that uses VR and AR [augmented reality] technology to digitally re-create any vehicle that needs to be featured for an advertisement,” he relates. “It also interacts dynamically with its environment. That means that when the photorealistic representation of an actual car is broadcast on your screen, it casts natural shadows and reflects light as it twists and turns around corners and climbs hills during a shoot. They have combined that with another just-announced technology called Cyclops, which is a solution that applies AR and game-engine technology to render on-set action for filmmakers in real time. Directors do not have to wait to see what the shoot looks like at a dailies session or in post-production. This will create immense opportunities for talent in the VFX community.”

Imageworks’ Randy Lake says the synergy percolating between the emerging virtual reality realm and the traditional visual effects business has caused his company “to become involved in initiatives developed within Sony Pictures Entertainment to explore and develop techniques that bridge the workflow used in our feature film projects into the VR space. We see the opportunity for a bi-directional exchange of technology and workflow as the VR medium and business model evolves. As an example, this can take the form of creative visualization technology within our traditional film pipelines, or R&D from the VFX space helping to improve the fidelity of virtual worlds. In addition, we believe AR will play a big role in the filmmaking process. We can build real-time visualization tools to make better decisions on set.”

IMMERSIVE CONTENT

Lake’s point illustrates why the arrival of virtual reality tools and concepts has opened the door to an entirely new form of immersive media content that, by its nature, will rely heavily on visual effects artists, tools and techniques. Indeed, at major facilities, there is currently a heavy “cross-pollination of techniques and capabilities and talent that flows between feature films, broadcasting, advertising, games, and now into the immersive arena,” explains Technicolor’s Sarnoff.

Studios and artists are already hard at work exploring this “immersive arena,” causing new creative laboratories to spring out of major facilities to research techniques, tools and concepts along the way. ILM has launched a new division called ILMxLAB to spearhead such work and, likewise, Technicolor has opened a new facility called the Technicolor Experience Center (TEC), while Framestore has launched Framestore VR Studio, just to name a few.

In the short term, some facilities are producing VR experiences designed to promote major feature films. Damien Fangou, CTO at MPC Film, a Technicolor company, for instance, points out that MPC recently worked on the Passengers Awakening VR experience project for Sony, linked to the release of the feature film, Passengers; and also on the Alien: Covenant in Utero VR experience for Fox, tied to the Alien: Covenant release.

Beyond such applications, a major focus of such facilities is to figure out how the VFX industry can “take advantage of the unique opportunities that VR and AR offer in their various [platforms] – location-based, at-home, mobile, and so on,” suggests Mohen Leo, Director of Content and Platform Strategy for ILMxLAB. While the foundations of such facilities are clearly being built on top of infrastructures that visual effects facilities already have for feature film work, Leo suggests experimentation across the industry is focusing on various new forms of interactive entertainment.

“For many years, ILM has made increasing use of real-time computer graphics to support virtual production for films,” Leo explains. “Through on-set, real-time previsualization, real-time previewing of motion-capture re-targeted onto digital characters and, eventually, even scouting digital sets using VR headsets, we tried to let film directors feel like they can intuitively plan and shoot a movie in the digital worlds we create. With VR and AR devices suddenly becoming consumer products in recent years, we realized that if we could let directors step into our virtual worlds and interact with fantastic characters, the next logical step would be to let our audience do it, too. Every fan of Star Wars has wondered what it would be like to be in the Star Wars universe and meet its iconic characters. We wanted to make that possible, so in 2015 we started ILMxLAB with the specific goal of exploring opportunities in immersive storytelling. Since then we’ve released a variety of experiences and experiments, and are continuing to work on increasingly ambitious ideas.”

People in this sector “don’t think of these immersive story experiences as a new form of film, nor are they a new form of video game,” Leo adds. “AR, VR, and other emerging digital content platforms are new media that will define their own new language for storytelling and find their own places alongside film, TV, video games, and other traditional media. In creating these experiences, we draw on the talents of VFX artists, along with people from the games industry, sound effects, engineering, music, storytelling, cinematography, and other disciplines.”

Some of the resulting content will be radically different from what is on the market today, he suggests, but, simultaneously, the resulting tools for making VR and AR content “will flow back into filmmaking and television,” Leo adds. “These tools will probably make high-end, user-generated content increasingly streamlined, but linear films and video content are a mature medium with over a century of history. The tools may change, but the final content will likely still follow established rules. On the other hand, the rules for what makes a compelling VR or AR storytelling experience haven’t been written yet.”

Aron Hjartarson, Framestore’s Executive Creative Director, calls content coming out of such institutions, including Framestore VR Studio, “non-traditional work.”

“[In our Los Angeles office], when it comes to non-traditional work, we have been very busy working on anything from 360 video content to full-room scale experiences,” Hjartarson explains. “Distribution has been via several avenues – anything from YouTube to the App Store, and everything in between. One of the interesting things about these projects is that the clients are diverse and come from different channels than our traditional business. We are finding that our core competencies have a wider application, from development to storytelling.”

Hjartarson emphasizes these new avenues increase the need for VFX artists. “As far as how VR impacts the visual effects industry, there is greater demand for [visual effects artists] than before,” he says. “[For the VR Studio], the majority of our artists still come from our existing talent pool, which is deep and wide. But on the CG and compositing side, we have expanded the skill set of many of our artists to deal with the challenges involved, and even on the development side, we have taken some of our best developers from the traditional side, as well as added some new talent to complement them.

“Also, 360 video is very challenging technically and needs supervisors that have a deep understanding of camera systems, optics, and how to reverse-engineer them. This is leading to a lot of R&D work in computer vision/computational photography, which is useful in traditional work. Thinking back to traditional VFX projects I’ve done in the past, we have developed tools that would have made those shows a lot easier. I think computational photography will develop at a fast rate, providing viable solutions for volumetric capture, which has a potential to influence everything we do, VR and traditional.”

BEST TOOLS EVER

While working on Air Force One in 1997, Richard Edlund, VES, suddenly realized that digital techniques would eventually push past generations of practical effects work and mechanical engineering breakthroughs like motion control. Today, he views the work he and others did on Star Wars in the 1970s, and on the projects that followed, as “steppingstones” on the way to a revolution that continues to pick up steam.

“On Air Force One, we had a 22-foot wingspan 747 model [built in the model shop of Edlund’s former company, Boss Films],” Edlund recalls. “It was perfected – a magnificent model. But halfway through production, our guys created a digital version of the 747. The CG one was more useful than the huge [practical] model – so realistic [that it was used for some close-up shots of the plane]. The huge model required a day to shoot the model and a day to shoot the matte pass – a complicated nine-wire system built just for that show and never used again. [In that era] we would invent ourselves out of mechanical corners that we constructed, but motion control and all those things were steppingstones toward what we have today with CG.”

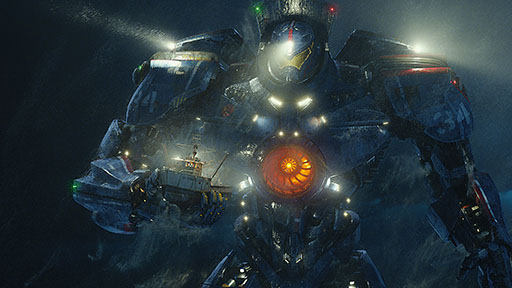

Radically improved simulation software tools have greatly accelerated this phenomenon beyond anything envisioned back then. ILM’s Lindy DeQuattro points out that “our water pipeline for The Perfect Storm [2000] was considered cutting edge at the time, but it was really just a cloth simulation for the water surface with particles generated based on motion vectors and surface normals to create splashes and sprays. These days, we have a true physics-based fluid simulator, which automatically generates accurate bubbles, splashes, mist, and so on, based on fluid motion. If you compare the water in The Perfect Storm to the work we more recently did on Pacific Rim [2013], it’s night and day. And we’ve had similar sweeping breakthroughs over the years in our creature pipeline, where we incorporated muscle and flesh simulation into the animation; in our rendering/shading pipeline, when we moved from a ray caster to a ray tracer; and in our lighting pipeline, when we switched to HDRI data and environment lighting.”

Those improvements, in turn, are powered by the incorporation of increasingly powerful game engines. Indeed, DeQuattro insists “the use of real-time tools will continue to be at the forefront of the industry.”

“They are the key to better on-set visualization, as well as rapid turnaround and more iterations in post work, which leads to higher quality and lower costs,” she adds. “Improving the technology used in game engines to be able to produce film-quality VFX will be a huge area of development and will dramatically change the industry.”

According to MPC’s Damien Fangou, major advancements in The Jungle Book and other recent films were “all made possible around rendering technology. To be able to render such a large photoreal world was not a small feat. It required a tremendous amount of computing power to deliver those shots. We were able to leverage both our large in-house render farm at MPC, but also our secure Cloud rendering workflow.”

Indeed, Cloud computing, industry professionals argue, is about to become a more routine and powerful weapon in the VFX artist’s arsenal. Fangou expects “the Cloud to trigger a huge transformation in the VFX industry, as it will enable new workflows and allow for a greater complexity of work and photorealism in a shorter amount of time.” Sarnoff, for his part, believes Cloud storage will become important to the economics of running a visual effects facility because “Cloud storage is having an interesting impact on how [our industry] thinks about where data belongs and how we should pay for it.”

By that, he means, “it’s not just a question of managing storage costs. It’s more about shifting storage line items from an inflexible CAPEX [capital expenditure] center, avoiding the need to buy expensive IT equipment, to a more flexible and scalable OPEX [operational expenditure] center, where storage service openings increase and decrease dynamically, depending on how you use it.”

Fangou adds, “There are also big opportunities around [artificial intelligence] technology. I expect the visual effects industry will be seeing some big changes with the automation and machine intelligence that AI is bringing.” Catmull agrees. In particular, he says the Pixar, Disney Animation and ILM research units are all investigating deep-learning algorithms and the wider AI paradigm to see how such systems might make certain visual effects processes more efficient. The problem, he suggests, is that while he is optimistic “deep learning is likely going to have an impact on our industry,” it still remains “such a broad concept, so it is not crystal clear what that impact is going to be yet, beyond the fact that the rate of growth and use of these chips puts us in a place to apply them in some unforeseen ways.”

Industry representatives generally agree that, at the end of the day, most of these issues come down to how the visual effects industry does things, and what tools and workflows it employs to do them. Tim Sarnoff suggests the verdict on these advancements, as always, will be rendered by the public. “The experience and the storytelling is what matters most when evaluating success in the creative technology arena, not the technical innovation itself,” Sarnoff emphasizes. “This has been the pattern in entertainment and communication throughout the ages, and I see no reason to suspect this pattern will not repeat itself again.”

Manufacturer POV: VR/AR Expansion Drives Technology Demand

Since the tools visual effects artists use are central to the health and success of the industry, it’s important to remember that technology manufacturers are constantly evaluating the industry’s direction as they evolve their products. One industry-standard compositing tool, for instance, is Foundry’s Nuke software, which reached version 11 this summer. Foundry’s CEO, Alex Mahon, suggests technologies like Nuke, now more than ever, are being developed with new workflow methods, new markets and clients, and new image quality requirements in mind. In particular, she says, keeping the technology “highly scalable” is crucial.

“Nuke has always been designed from the ground up to be highly scalable,” she emphasizes. “HDR and color depth have been around for quite a while in feature films, and Nuke has always been able to effectively deal with both of those advancements. This is equally true for faster frame rates and higher resolution images. That being said, such processes do require more processing power and computer memory, which is where we see a huge opportunity for Cloud workflows.”

Cloud-based workflows, Mahon suggests, are likely to fundamentally change the visual effects industry, and so Foundry is pushing in that direction. The company, she says, recently launched a new Cloud-based post platform called Elara, which “will play a huge part in streamlining the creation of VFX content. Opening up content creation to the Cloud will give an edge to independent professionals and small to medium-sized studios. By making infrastructure more scalable and cost-effective, we are making high-end visual effects far more accessible. This will undoubtedly change the way we produce content, whether it is for videogames, television, film, or any other medium.”

These developments, she suggests, are good news for facilities large and small that rely on Nuke and related products, particularly since the market and applications for the technology, and the services provided by visual effects artists, both appear to be expanding.

“From a business perspective, we have noticed how broad the demand for VR and AR content is, coming from industries that we might not have traditionally associated with this technology,” Mahon relates. “We’ve been seeing big leaps from the AEC space [architecture, engineering, construction], as well as in healthcare, education, and not-for-profit.”

“The industry has changed massively over the past 10 years, but despite this, I believe it has a strong future, especially following the rise of VR and AR content,” she adds. “We’ve seen a rise in demand for our software from an increasing number of small and medium-sized VFX houses, which is really helping to drive the industry forward. In fact, this trend led us to introduce a subscription-based pricing model for [Foundry’s modeling software] Modo, offering a more accessible and affordable way for smaller artists and studios to create exciting, cutting-edge content.”

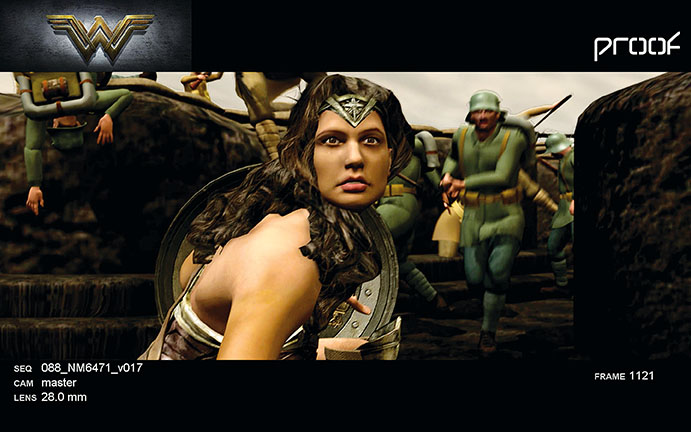

Previs Helps Bring VR/AR to Front Lines

Previsualization has quickly grown from an option to a vital component of the filmmaking process. One of that sector’s veterans, Ron Frankel, Founder and Creative Director at Los Angeles-based previs studio Proof Inc., says the discipline has raced from a niche service used for particular sequences on a handful of films to something that “plays an integral role in every major motion picture made today, as well as many of the smaller films.” Now, Frankel says, the previsualization sector is rapidly revamping its tools and techniques along with the rest of the industry.

“Currently, we’re employing real-time game engines and augmented reality technologies primarily for our non-feature film projects, but we’re already seeing cases where those techniques can benefit feature film production,” he says. “The most prevalent is with on-set visualization, which is really just a variation of AR geared specifically for cameras using professional film lenses. We’re also seeing applications of VR for film production with virtual set walk-throughs, and using game engines for real-time shot design.”

Frankel suggests that using game engines to render imagery as close as possible to real time is particularly important for previs, because “previs artists are in a unique and privileged position, sitting amongst the creative stakeholders for a project. We’re right there as decisions are being made, and we’re often instrumental in informing those decisions.”

Therefore, he adds, “we want to work at the speed of ideas, so that we can engage our clients in creative dialogue. Our current workflow allows us to make changes in a matter of seconds, or minutes. That’s fast, but it’s not real-time. We’re always looking for new tools or technologies that will give us the ability to work faster, and with more creative control. Game engines, headsets, and augmented reality are all technologies that promise that combination, so I expect to see more VR and AR incorporated into our visualization work in the near future. New techniques that were once considered bleeding-edge and experimental are now production-tested and readily available. It will be interesting to see where we are five years from now, but I expect we’ll be doing the same work in entirely new ways.”