By CHRIS McGOWAN

Images courtesy of Netflix.

In the post-apocalyptic Netflix adventure series Sweet Tooth, based on the Jeff Lemire comic book and developed by Jim Mickle, a viral pandemic called the Sick has wiped out most of the humans on Earth. At the same time, a vast number of hybrid babies have begun to be born – part human and part animal – and they are blamed for the disease by groups like the Last Men, who actively hunt them down. In this charged atmosphere, Gus (Christian Convery), a human/deer hybrid boy, leaves a solitary life behind to embark on a perilous journey to find his mother. He is accompanied by Tommy Jepperd (Nonso Anozie), a formidable former Last Man who has changed his ways and now reluctantly protects Gus.

Zoic Studios was called in to help create Sweet Tooth’s dystopian world full of decaying urban areas, wild animals and hybrid creatures. It required a “huge variety of different effects,” according to On Set VFX Supervisor Matthew Bramante. “Not only was the shot count high, but the variety and scope were huge. Everything from photoreal animals with dynamic muscle, hair and fur systems, to complex set extensions, dynamic fireworks, and digital augmentation to a variety of characters and puppets to bring the hybrids to life, to name just a few. A show like this really takes a whole host of talented artists with a massive variety of skills, the whole physical production team along with the great team of artists and supervisors in post at Zoic.”

Bramante notes that the post shot count could have been much higher. “A handful of sequences could very well have gone the way of a more traditional blue/greenscreen approach, but instead we used LED walls to capture much of it in camera.” One example of this was a park visitor center in Episode 102, where Gus and Tommy Jepperd take shelter. “The main room of the visitor center had an array of windows and a balcony that overlooked distant mountains. The team at Zoic used a combination of Unreal Engine and traditional matte painting and comp techniques to create those views at different times of day in different lighting scenarios for the variety of scenes we had in front of those windows. The Jepperd fight scene on the balcony during the storm was a particularly effective use. There were quite a few goosebump moments on the day when Jep would hit a pose just as the lightning in the BG would strike.”

Visual Effects Supervisor Rob Price adds, “Utilizing newer techniques such as LED screens for some environments meant that we needed to be involved in production much earlier than traditional VFX post work – we are not typically producing final pixel imagery while we are in prep. Being able to collaborate on scenes like the Jepperd/Last Men fight during the lightning storm was an amazing new experience.”

In addition, the train shots in Episode 106 were perfect for LED walls, according to Bramante. “Nick Bassett, our Production Designer, and the art department team built a couple of boxcar sets, with open side doors, windows, vents, and doors between cars. Rather than using blue/greenscreen to comp moving BGs out there, we again went the LED route. For our BGs we took a drone unit down to the South Island of New Zealand to capture a variety of exterior plates that could cover any of the views outside the boxcars. Then, on the day, we’d line up the plate and tweak perspective and horizon to match the shot. It all worked to great effect.”

Sweet Tooth required the creation of several different time periods, events and locations. “Firstly, the immediate panic of the pandemic in the pilot required lots of in-progress devastation to add to the chaotic nature of the event,” comments Price. “This was mostly adding matte-painting set extensions and compositing elements of burning buildings, smoke/atmospherics, and additional CG crowds and vehicles that we animated, lit, and rendered out of Maya and composited in Nuke.”

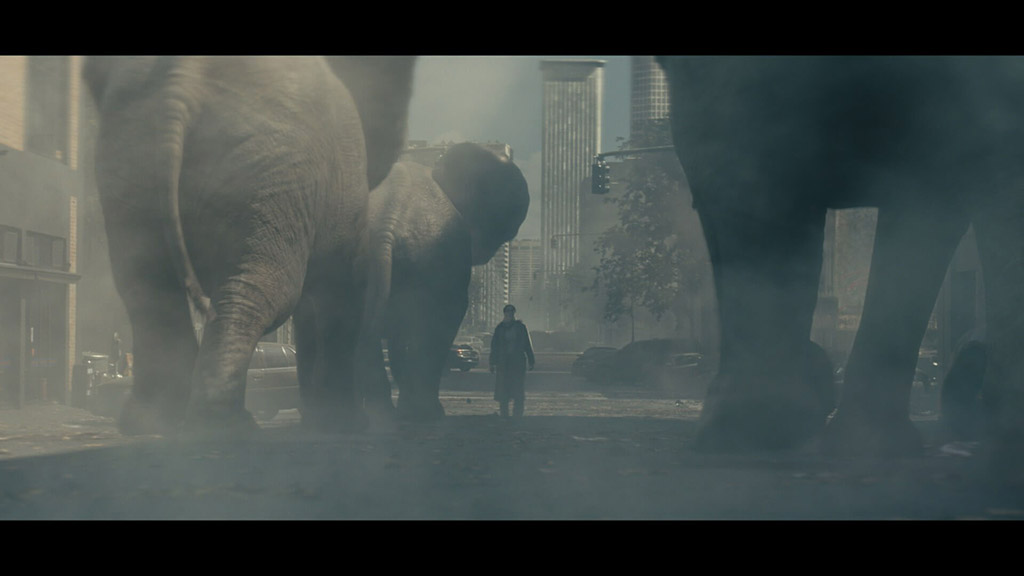

Once the world had settled, the team’s focus turned to the aftermath: adding what was left behind from the chaos, according to Price. “You can see this most clearly in the scene where Aimee encounters the herd of elephants. The city set extension is all about how we have just abandoned our world. We began with plate photography and Lidar scans of our Auckland city street location. Using Houdini, we procedurally built our cityscape, focusing on replicating the feel of new and old construction. Within the city we then scattered debris, cars, foliage, and simulated atmospheric fog. Doing this all in a single package allowed us to have more dynamic interaction with our CG elephants running through the scene.”

For the run-down cities, “it all started with great locations,” according to Bramante. He, Bassett and Episode 104 director Toa Fraser went on extensive scouts around the city. “Mostly we were looking for areas that already had a lot of foreground overgrowth. Again, we were looking to reinforce the concept that nature was recapturing the city. We found some great locations in downtown Auckland with buildings completely covered in vines. By staging our performers in front of those and dressing in some abandoned cars and FG plants, we could effectively focus the VFX work on the deep BG with matte paintings of collapsing buildings and large-scale plant growth. It was quite an effective use of VFX working hand-in-hand with the art department and locations.”

Price continues, “We developed tools inside of Houdini to better grow vegetation more naturally. Being able to procedurally grow vines, flowers and any plants we needed allowed us the creative flexibility to quickly iterate on environment work and add overgrowth, whether that was a city street, guard tower or train bridge.”

Along with the various buildings and streets being overtaken by plant life, there are large animals running wild – zebras, giraffes and a herd of African elephants. “We knew we needed some extensive planning,” Bramante explains. “This was my first time using Unreal Engine for previs, and I was impressed with its speed and quality. The team at Zoic was able to [put together] some complex sequences very quickly.” He explains, “By visualizing the scene early on we were able to plan a handful of physical objects that would interact with the digital elephants. I’ve always felt that with large CG moving objects and creatures it’s critical to ground them with some environment interactions. In this case the elephants bump into a car and knock a side mirror off; both were done practically in the plate. As with everything on the show, we Lidar-scanned the set to give the post team exact matches of the set and the dressing.”

Previs was also key for the preserve assault scene, Bramante notes. “The end of any season is always a mix of big set pieces and a tight schedule. Gabi [Mejia], the previs artist at Zoic, was able to build us a great looking previs in, I think, a week from kickoff to review. It was a huge undertaking and the scene turned out all the better for it. It’s critical to have a map for a complicated sequence like that.” To create the CG animals, Bramante explains, “We modeled, sculpted, textured and shaded inside of Maya, ZBrush and Substance. We used Yeti for the fur grooming and simulations, along with Ziva for our dynamic physics-based character simulations, which was important for a high level of detail as many of our animals had to perform and hold up extremely close to camera.”

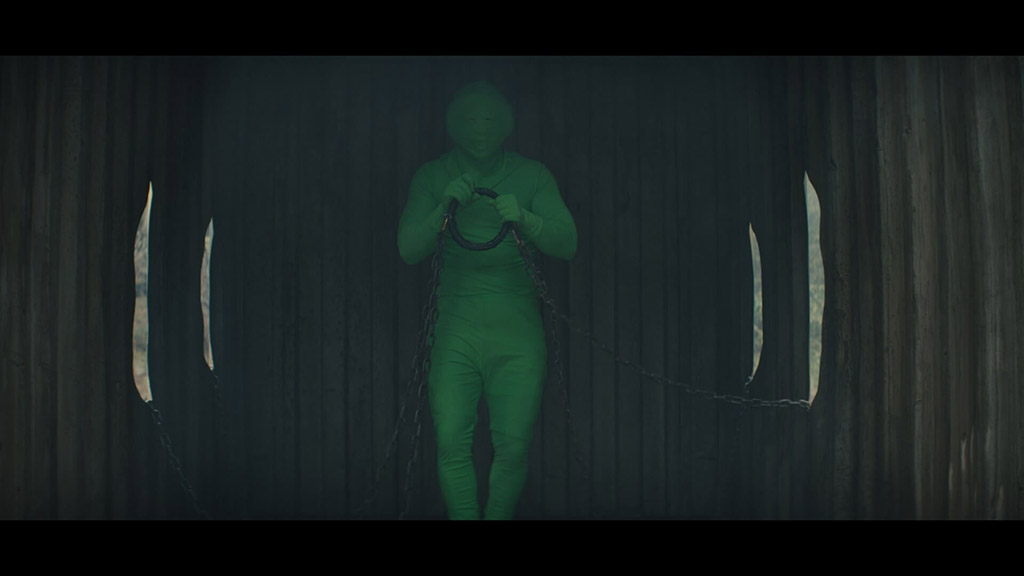

Price is also fond of Daisy, a Bengal tiger, saying, “We spent a lot of time developing the tiger asset, and I am extremely happy with how she turned out.” Bramante adds, “Daisy is confined to a metal shipping container, where the Animal Army [group] has chains to control her. Keeping the action contained allowed us a couple of benefits. We could hide her in shadows and use some shafts of light to reveal her.”

The hybrids on the show come in forms that range from mostly human to mostly animal. Gus, for example, is a boy with deer ears and small antlers, while Bobby resembles a bipedal groundhog. Some were clever makeup designed by Stef Knight and the makeup team; some, like Gus, had ears with animatronic components; and one [Bobby] was entirely a puppet. Bramante explains, “Gus was an interesting case: his ears are a major part of his character and do a lot to convey his emotional state. Grant Lehman, the puppeteer for Gus’s ears, would operate them remotely during each take. In a few key situations, they were augmented in post to adjust timings or exaggerate the action, but for the most part this was practical.”

Fractured FX built two different puppets for Bobby. One was meant to be a more traditional rod puppet with more flexibility in the body, while the other was an animatronic puppet for face articulation. Bramante notes, “From a VFX perspective, we were mostly adding and augmenting, pushing the puppet to do things the animatronics couldn’t handle on their own. Adding things like eye blinks, warping the mouth to form complex phonemes, and adjusting the brow and cheeks to add extra levels of expression are some examples of that. In a few cases where the physical puppet wouldn’t be able to perform some of the actions, like walking across a room or diving into a hole in the ground, a CG version of Bobby was used.”

Price adds, “As the team at Fractured FX created Bobby, they would send us the digital sculpts and scans along the way so that we could create our CG version to match. Building assets in tandem made the final result much more achievable, as many of the same underlying mechanics – rigging, muscle, skin, fur and clothing – all build up in a similar way, whether it be done practically or digitally. The digital Bobby needed to match one-to-one with the practical version because of how the two intercut in the same scenes. Our digital asset, lighting and compositing had to be very precise.”

Another VFX challenge was the title shot of the first episode, which starts on a plate of Dr. Singh looking into the maternity ward. Price comments, “There is a hand-off from the practical camera to a digital camera so we can travel out through a sheet of glass, leave the room, and transition from 2D clouds on the walls to 3D clouds in the sky. There are then three different drone shots stitched together to create the somersault movement from the camera. We eventually stitched that into another crane shot down to Pubba [Will Forte] and Gus. This shot had some of the most complex re-times, plate stitches and 3D camera takeovers I have done, mostly because it looks deceptively simple as a final shot.”

Bramante’s favorite shot came in the opening of the second episode, which tells the tale of Aimee Eden (Dania Ramirez), who goes into lockdown in her office for months when the outside world falls apart. “To tell that story, Jim Mickle, our director and showrunner, wanted to do a single time-lapse shot, starting on Aimee, then pulling back and moving through the room to show months of time passing. To realize this, Jim and Dave Garbett, our cinematographer on the episode, and I set to planning a complicated multi-pass motion control shot. Through the shot, we see Aimee at various points during her isolation. Starting with the camera move, we planned the day/night cycle timings, all the while knowing we wanted a sort of stop-motion feeling, so we planned hard cuts into the light timings. In the final shot, there is a constant sun rising and setting; this was achieved with a light on a jib arm moving through the shot to match the timings we planned. This was another place the previs Zoic did for us was critical in giving us a roadmap to get the shot.”

He continues, “After running a variety of passes with Aimee and a clean plate of the room, we set to shooting various passes with different levels of dressing. Showing how Aimee had survived through those many months, the room fills up with objects: stacks of books, cases of bottled water [later empty bottles], drawings on the wall, even potted plants at different levels of growth. By shooting these in separate motion control passes, we could selectively have the objects appear, move and disappear while the shot plays out, taking the room from a normal office to a lived-in quarantine living space. The final shot goes by quickly, less than two minutes, but it is a particularly cool effect.”

Bramante comments, “All in all, it was a very collaborative experience, both among the various physical production departments and the post team as a whole.” Price adds, “We are extremely fortunate to have great on-set work across all departments. We had a lot of back and forth between departments to figure out what we could all bring to the table. For this story it was particularly important to find the threshold of where nature is taking back our world versus going too far where our world is completely gone. There is a balance there, and I think we all nailed it.”

Price continues, “Working with such a creative team was amazing. This production always had an answer – the design team worked very hard creating look books so we always knew where we needed to go creatively. Because we were so targeted in what we could do practically on set, we were able to really focus on adding, not fixing, when it came to VFX. There was always the discussion of what was best for the final image.”