By TREVOR HOGG

Traditionally, movie and television production has been divided into three stages: pre-production, production and post-production. However, the lines are blurring with advancements in virtual production. A great deal of interest was generated by what Jon Favreau was able to achieve utilizing the technology to produce The Mandalorian. Interest turned into necessity when the coronavirus pandemic restricted the ability to shoot on location around the globe. If you cannot go out into the world, then the next best thing is to create a photorealistic, computer-generated environment that can be adjusted in real-time. Will virtual production be a game-changer that will have a lasting impact? Industry leaders weigh in.

Scott Chambliss, Production Designer, Guardians of the Galaxy, Vol. 2

“One of the best qualities of our medium is its essential plasticity. By replacing traditional bluescreen/greenscreen tech with LED display walls, a stage working environment is dramatically enhanced by its chief gifts of interactive practical lighting and directly representative motion picture backgrounds the screens display in-camera for shooting purposes. For sci-fi and fantasy projects these advances are major and practical additions. Now for the first time and as a matter of course, designers will be directly participating in the final digital completion of their own work on a project. This alone is a significant and long overdue change.

“Virtual production tech isn’t appropriate or practical for every genre. If a project is set in the present day and requires direct interaction with a story’s environment as a Mission: Impossible or Bond movie would, the tech offers little benefit. Medium to small-budget projects will also have difficulty accessing the tech as it comes with a healthy price tag, both for the stage setup itself and the lead time required to create digital assets for playback. Neither is the tech a magic bullet that will remedy layers of problems we face going back into production in the age of COVID. Nevertheless, The Mandalorian-style virtual production is a visual quality enhancing gift to top tier-budgeted projects that traditionally require a load of bluescreen/greenscreen stage environments. I don’t imagine many on a production team would be sad to lose those grating and problematic chromakey drops once and for all.”

Scott Meadows, Head of Visualization and Virtual Production, Digital Domain

“We recently had a client in the middle of reshoots when COVID hit, forcing us to rethink how best to proceed. We previously completed the previs for this sequence, so we already had a virtual environment that could easily be integrated into Unreal Engine. We had several props and CG characters, and our team put together some blocking animation that we added to Unreal Engine. Within a day, we had everything we needed for the filmmakers to do whatever they wanted within the scene. For the actual shoot there were only seven people present, with the director, editor, VFX Supervisor and Animation Supervisor all calling in remotely. We broadcast the camera operator feeds to them, so they saw the virtual camera shots in real-time. Afterwards, we scheduled follow-up shoots with someone in a mocap suit to provide a more nuanced performance.

“Filmmakers love to iterate though, so we’ve modified our pipeline to allow them to do that throughout, and even after virtual production. We are going to spend more time in the game engines long-term. Right now, Digital Domain is one of only a few other studios that have both the pipeline and the staff to manage everything correctly [and efficiently], plus we also have the advantage of a full motion library created over time on our mocap stage. The goal with all of this is to design sequences that flow well and are visually compelling. Real-time is great for this, and it gives you the feeling of being on set and/or finding a shot on the day. As virtual production becomes more accessible and in-demand, it won’t be treated as a separate part of the filmmaking process, but another creative tool we use to produce great work.”

Sam Nicholson, CEO and Founder, Stargate Studios

“Virtual production is the new Wild West of the film business where the world of game developers and film producers are merging. From photoreal avatars to flawless virtual sets and extensive Unreal worlds, the global production community has embraced the amazing potential of virtual production as a solution to many of the production challenges facing us during the current global pandemic.

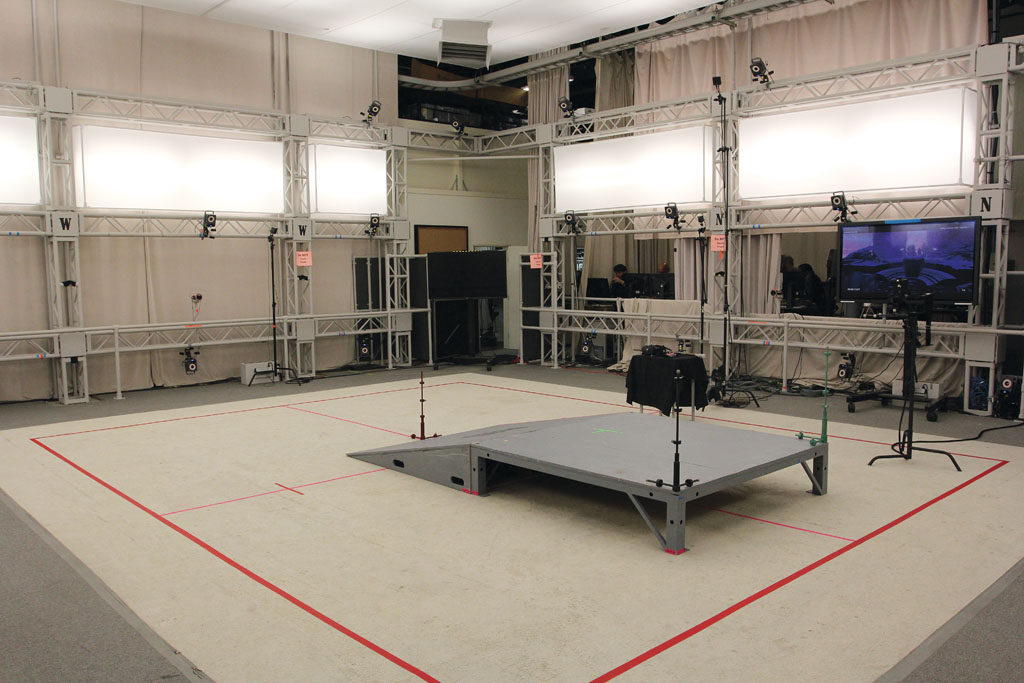

“The convergence of volumetric imaging, spatial tracking, scalable LED displays and extensive real-time visualization tools, such as Unreal Engine 5, are increasingly enabling a new ‘Virtual Era’ of production on set. Simultaneously, traditional visual effects post-production workflows are accelerating towards real-time with advances in computational processing, cloud rendering and integrated remote workflows which enable complex hybrid combinations of new entertainment formats. Physical reality, sampled reality and synthesized reality can now be seamlessly blended by creative artists and skilled technicians into multiple, complex entertainment experiences – increasingly produced and experienced in real-time.

“Individual, highly-specialized production teams will become increasingly decentralized, yet remain hyper-connected on digital platforms. These new strategic partnerships and virtual production communities will in turn share knowledge, integrated assets and unlimited horsepower enabling virtual productions to collaborate on a global scale unimaginable by today’s standards.”

Adam Myhill, Creative Director, Labs, Unity Technologies

“Now that the industry understands how real-time technologies can best benefit a production, the next step is making them accessible to every level of filmmaker. There’s no reason that a student making his first film shouldn’t be able to benefit from the same kinds of real-time production tools as Jon Favreau and Steven Spielberg. Throughout 2020, we also saw the emergence of a different type of democratization powered by real-time productions – the democratization of location.

“The pandemic we’re living through has had a massive impact on countless industries, and film was no exception. Not only were studios forced to pivot to new forms of distribution to reach their audiences, but the world of on-set production ground to an absolute halt. But for some studios, real-time production has helped members of their teams get back to work. In other cases, real-time production is helping to power the transition to animation to help keep content in production. Instead of the hours per frame that traditional methods of animation take, real-time rendering in an animation pipeline occurs at just that: real-time. While real-time production wasn’t designed to help keep Hollywood studios working during a pandemic, it has definitely helped to take some of the pressure off of production that would otherwise be stranded.”

Paul Cameron, Cinematographer and Director, Westworld

“The volumes are great for The Mandalorian, landscapes and putting a massive cargo ship in space multiplied by 100 ships, but you can’t put sets on a 360-degree LED volume. We will be testing big LED volume-type applications for Westworld this year. Is it all based on COVID? No. It’s based on what we did last season and coming to the realization that we want to work in a combination of set and LED volume walls. Everybody is looking at people like us to say, ‘Shoot it all in a LED environment. You’re fine.’ But that’s not the case. The inherent problem is that we’re looking at an industry that relies on a lot of people going to different locations or stages or both. Now with COVID a big panic button got hit. How do you stay at home and do it? We’ve got to get back onto the plane with the same groups of people to produce the same imagery and content.

“I hope that virtual production and aspects of it remain similar to what they are now. What I don’t want to see are discussions of how to capture images with new LiDAR-type technology of lens-less cameras recording environments so that it could all be determined later on how the environments are going to be used; that has been a moving target in the virtual production world anyway. The important thing short-term is to simplify stories, cast the number of people you need and find the locations required to tell a story. Let’s just get the content going and once the machine is moving then determine how virtual production can be improved for production across the board, instead of making it the driving force.”

Alex McDowell, Co-Founder, Creative Director, Experimental Design

“Someone like my son can play [a video game] for 72 hours in the same environment and is in complete control of his character’s costume, behavior and ability to move; he is standing at the center of stage at all times. The essence of filmmaking is that the audience is passive and immersing themselves in somebody else’s world. I don’t think it’s a possibility of them becoming similar and nor would you want to. On the other hand, as a designer I think absolutely. Finally, the film industry understands that game engines could be part of pre-production as well as post-production. The only design difference for video games is that you have to create infinitely large worlds for years of gameplay.

“When I worked at the beginning of Star Wars: Episode IX in London with the original director, Colin Trevorrow, ILM was part of the art department. Our virtual production process was rough-designing sets before illustration so you’re blocking environments relative to scale and geo location, pushing that wireframe into Maya and the game engine, putting VR goggles on the director, establishing keyframes from the director’s scout of the set, and feeding that information back to the artists in the art department who create high-resolution images that are constantly pushed back into VR for location scouting. The end result is that you have accelerated the project by eight to 12 months by having the director live inside the environment from day one.”

David Morin, Head, Epic Games Los Angeles Lab

“The big thing that happened recently is the ability to do photoreal rendering in real-time; that has changed the art department for those who have chosen to embrace the technology. For the glass house scene in John Wick: Chapter 3 – Parabellum, the reflections were influencing the set design. The normal CAD package was imported into Unreal Engine, which has the ability to do ray tracing and produce accurate reflections. The camera department could see where the reflection of the camera was going to show in that virtual twin of the set and have the design modified. You can also use it later for re-shoots if the set has been dismantled. It brings filmmakers back to a workflow that has the immediacy of physical filmmaking.

“The benefit of putting Unreal on the LED wall is that the parallax of the world changes according to the position of the camera. It’s not going to look like a flat wall. That’s a game-changer in the way you can shoot something. The biggest opportunity for the visual effects industry is for it to move back from post-production into production and pre-production so there is this collaborative feedback loop. Between 40% and 50% of shots captured on set for The Mandalorian were final while the remainder were traditional visual effects. This won’t replace all of the other ways for doing things but adds a new way of working that brings a lot of benefits with it.”

Christopher Nichols, Director, Chaos Group Labs

“It will be great when virtual production becomes production. There are a couple of things that need to happen. If what comes out of shooting virtual production looks close to what it is going to look like in the final frame then the director and DP will be able to see and change things as opposed to the old days when they were looking at rudimentary grey-shaded geometry. Now they can say, ‘I want a key light here.’ Those decisions are being made on set as opposed to passing it down to some visual effects artists to make that decision later and guessing what the intent was – that is starting to occur now with some of the stuff happening with game engines. The next step beyond that is to have real physical lighting in virtual production. The only way that is actually going to happen is with ray tracing. Once you start doing full ray tracing in real-time with real global illumination and lighting coming out of real things then it is going to get closer to looking like the final frame. At that point you might be able to shoot things live, and that is going to happen. Maybe not this year, but very soon.”

Nic Hatch, CEO, Ncam

“The journey that we’re all in now with virtual production is how do we make it easy, achievable, affordable, scalable and repeatable? Part of that is the rendering engine inside of it. You can see the effort that Epic is putting into this space, which is phenomenal, and we work closely with them. But there are other bits of technology required to make virtual production a reality for everyone. What is required for the future and will open up a huge amount of possibilities is depth per pixel in real-time. When the technology does not just understand the X and Y coordinates but the Z coordinate as well, then things become a lot more flexible. You can start to automate various processes that today are simply manual.

“As a filmmaker, we shouldn’t care whether it’s real or not when looking through the lens. It just looks real. The same [is true] with visual effects if that’s what you’re trying to do. I don’t think this means post-production goes away. It means that the heavy lifting and planning are done upfront. If you want to make changes afterwards you can certainly do that. There is no degradation in the data. Ultimately, what post-production will turn into will be tweaking, not design; this is a good thing because that is where it can go quite wrong, especially with re-shoots.”

Ben Grossmann, Co-Founder and CEO, Magnopus

“Everyone’s been salivating over Unreal Engine 5, and if that hits in 2021, it’s going to be a madhouse. The shortage of skilled real-time artists and engineers has been a major point of friction for filmmakers the last couple years. There are just more shows that need real-time artists than there are in the market. Fortunately, there’s a huge investment being made to train skilled labor, like the Unreal Fellowship.

“LED walls will grow to be a critical part of filmmaking, but can’t do everything; filmmakers still haven’t adjusted to the reality that they need to build great assets before shooting on a LED wall. Mobile devices will be shipping with LiDAR scanners. Filmmakers can start collecting the parts of the world they want to use, dropping them into game engines, and making the movies they want with less technical skills required. Plus, they can use those same sensors for performance capture, so I expect that is a pretty big leap forward in democratizing filmmaking.

“What I’d like to see is major advancements in applying deep learning and AI to solving a lot of the friction points at scale. However, everyone is so focused on making presentations and white papers that they aren’t producing tech people can use. I’d also like to see USD become ubiquitous finally, so we can work on materials and shaders next. COVID will force a lot of remote collaboration and that’s going to force the major studios to rethink their security requirements. Expect a lot of focus on ‘moving the studio system into the cloud’ [not at the vendor level, but above that]. It only makes sense that you consolidate the entire film production pipeline so that you can optimize storage and rendering resources.”

Rachel Rose, R&D Supervisor, ILM

“This is a really exciting time for virtual production in our industry. While virtual production has had a long history with early uses on films such as The Lord of the Rings: The Fellowship of the Ring and A.I. Artificial Intelligence, technology in this space is currently advancing rapidly. These advancements have a wide range of filmmakers excited about embracing the latest new workflows.

“Throughout 2021, we should see films and TV shows fully adopting the latest techniques for in-camera virtual production using LED stages, like what we have done at ILM on The Mandalorian. Our immersive StageCraft LED volumes with perspective-correct, real-time rendered imagery allow for final pixels to be captured in-camera while providing realistic lighting and reflections on foreground elements. As more traditional filmmakers get involved in virtual production, techniques that combine real-time technology with physical camera equipment will spread rapidly, like what we did with Gore Verbinski on Rango and what Jon Favreau did in VR on The Lion King.

“All of these virtual production workflows will advance further throughout the year as technology continues to develop. Expect to see more use of real-time ray tracing capabilities in the latest graphics cards. In practice, this means that virtual production previsualization and on-set capture will allow for more dynamically-adjustable, realistic lighting, including soft shadows and quality reflections. And new innovative software features for multi-user workflows will connect key creatives in a wide range of locations, allowing some collaborative virtual production work to happen seamlessly from a distance.”