By TREVOR HOGG

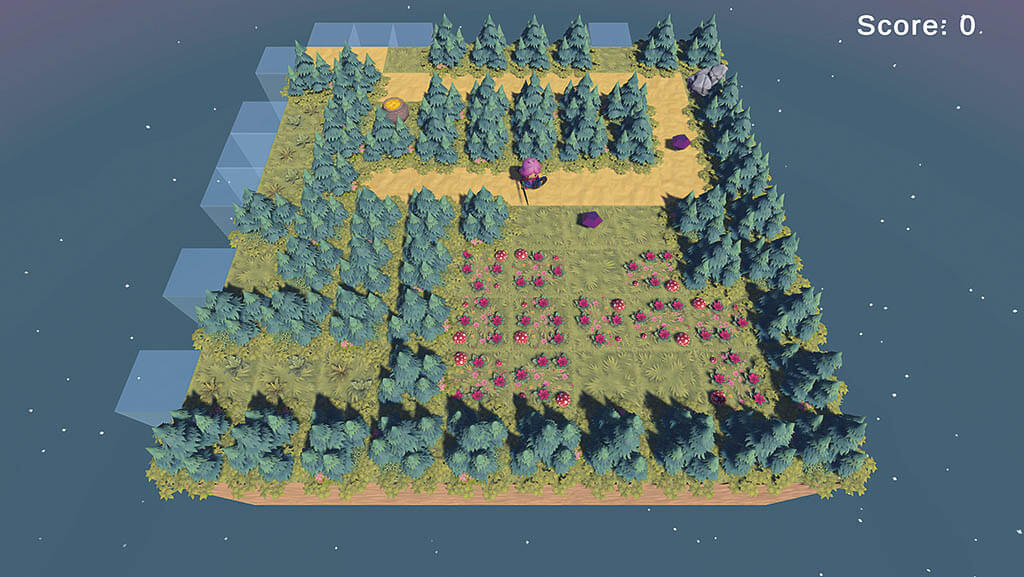

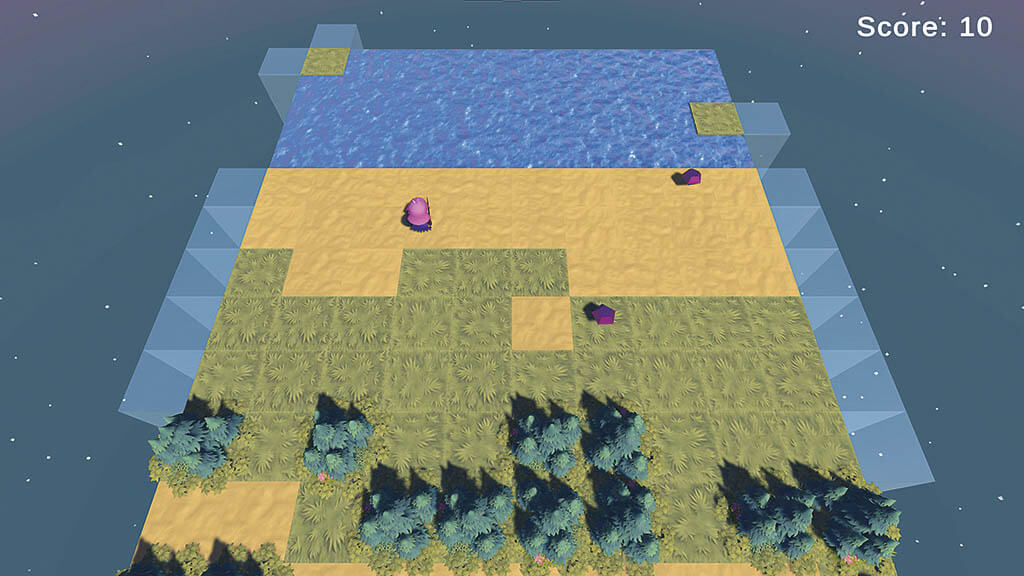

For the longest time, AI has been associated with non-player characters (NPCs) in the video game industry and divided between control structures and path-finding. “If you are in the chasing state, you’re using a path-finding algorithm to try to find the shortest path to the player character,” explains Julian Togelius, Associate Professor of Computer Science and Engineering at NYU and Co-Founder and Research Director, modl.ai. “If you are in the patrolling state, you are basically executing a fixed set of moves. Traditionally, you have all of these simple, symbolic AI techniques that have been there in one form or another since the 1980s. This is because when most modern game genres were developed, like role-playing games, first-person shooters and strategy games, we didn’t have modern AI algorithms, and we certainly didn’t have computers that could run them.”
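The two-state NPC that Togelius describes can be illustrated with a minimal sketch. Everything here is an assumption made for illustration rather than code from any shipped game: the grid representation, the `Guard` class, the `sight_range` parameter, and the choice of breadth-first search as the path-finding algorithm (production games more often use A* on a navigation mesh).

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search on a 4-connected grid (0 = open, 1 = wall).

    Returns the list of cells from start to goal, or [] if unreachable.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and nxt not in came_from:
                came_from[nxt] = cell
                queue.append(nxt)
    return []


class Guard:
    """Hypothetical NPC with two states: 'patrolling' and 'chasing'."""

    def __init__(self, route, sight_range=3):
        self.route = route              # fixed cycle of waypoints
        self.route_index = 0
        self.position = route[0]
        self.sight_range = sight_range  # Manhattan distance that triggers a chase
        self.state = "patrolling"

    def update(self, grid, player):
        """Pick a state from the player's distance, then take one step."""
        distance = (abs(player[0] - self.position[0])
                    + abs(player[1] - self.position[1]))
        self.state = "chasing" if distance <= self.sight_range else "patrolling"
        if self.state == "chasing":
            # Chasing: follow the shortest path toward the player.
            path = shortest_path(grid, self.position, player)
            if len(path) > 1:
                self.position = path[1]
        else:
            # Patrolling: move to the next waypoint in the fixed cycle.
            # Simplification: a fuller version would first walk back to
            # the route if a chase had pulled the guard away from it.
            self.route_index = (self.route_index + 1) % len(self.route)
            self.position = self.route[self.route_index]
        return self.state
```

Calling `update` once per game tick is enough to drive the behavior: the guard cycles through its route until the player comes within `sight_range`, then switches to path-finding toward the player.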

Togelius believes that AI is going to cause even further fragmentation in game development. “There will be designers who look at the new AI methods and say, ‘This doesn’t help me with the kind of games that I do.’ Other designers will go, ‘Hey, let’s start from the new AI methods, design around that and see what new things we get.’ Basically, what we call a game will expand even more in different ways.”

Open research and development initiative Ubisoft La Forge has been exploring applications of machine learning in video game production for over a decade. “AI works best when there is a lot of data available that represent the tasks you’re targeting,” states Yves Jacquier, Executive Director of Ubisoft La Forge. “As such, repetitive tasks are particularly suited to be generalized and automated.” By using motion capture data to train AI models, realistic and fluid character animation can be generated. Jacquier explains, “AI also has the potential to assist in automating the animation workflow, reducing the time and effort required by animators. A good example for that is Choreograph, a technology developed by La Forge and used in Far Cry 6, which helped create the animations in the game through an AI-driven technique known as ‘motion matching.’ Another prototype we developed at La Forge is Zoobuilder, which helps create 3D animations for animals from videos using machine learning. We’ve been using generative AI in voice synthesis, DeepMotion, text-to-speech and now Ghostwriter, our in-house tool created to assist scriptwriters. We’re seeing fast progress in terms of adoption internally in areas like ideation or concept art. We expect a similar trend in programming with assistance for writing code or finding the potential source of a bug and proposing a fix. We can expect the use of AI assistants to rise and progressively become an integral part of our everyday routine, going beyond basic functions to handle intricate tasks, such as assisting with coding and executing complex commands.”

Unity views AI tools as a means to further the democratization of the video game industry. “Muse Chat has helped the creation process that starts with natural language, which is a great place for all humans because that’s how we communicate,” notes Ralph Hauwert, Senior Vice President/GM, Unity Editor, Runtime, Ecosystems & AI/ML at Unity. “Being able to ask Unity, ‘How do I make a Match 3 game?’ It lists it out in steps for you. Getting that type of help accelerates creators on whatever part of their journey, whether they’re a starter, intermediate or expert. Sentis is quite an innovation from the perspective of what it intends to do. Building a neural network is already hard enough, but then deploying them to all the different devices with all different kinds of accelerators and APIs, that gets complicated and expensive for a small team of game developers. What Sentis does is be the runtime on the device that allows you to deploy one of your neural networks. Essentially, it’s a cost reduction and enables you to use the silicon that’s inside all of these devices to the best of their abilities. Right now, if you want to run a neural network on an iOS or Android device you’re already needing to make two different versions. Sentis takes care of that problem for you.”

In 2019, NVIDIA introduced Deep Learning Super Sampling (DLSS), an AI model trained on NVIDIA supercomputers that fills in detail and improves performance. “Since then, over 300 games and applications have adopted this technology, and we’ve continued to improve our AI rendering capabilities with the introduction of DLSS 3, which generates entire frames and multiplies performance,” remarks Ike Nnoli, Senior Product Marketing Manager at NVIDIA. “This success has inspired others in the gaming industry to create their own performance-enhancing solutions. By offloading rendering work to AI, DLSS has allowed developers to do more in their games, from pushing graphical fidelity to increasing geometric density. Beyond rendering, we’re spearheading generative AI technologies in gaming production pipelines. The launch of ChatGPT has created a surge in applications powered by Stable Diffusion and large language models [LLMs]. We’ve built NVIDIA Audio2Face for voice-to-facial animation, Picasso for text-to-3D assets and Avatar Cloud Engine [ACE] for games for intelligent NPCs. This is an area of significant R&D as generative AI will change how games are made.”

Automatic game testing is the most logical place for AI. “With games, you spend 10% to 20% of the game development budget testing it,” Togelius states. “It’s a huge weight limiter because testing games is actually boring because you do the same thing again and again. The beautiful thing is, this is the kind of stuff that everybody is okay with being automated – giving AI instructions on how to test the game, what to look for and what specifically to explore. Also, you can use deep learning when it comes to upscaling a game or making a different visual style, like anime or a 1960s Disney movie. Then there is improved player modeling and capturing playing styles. This gives you a lot of examination for how to tune your game.”

Continues Togelius, “We’re going to get better building environment-generation, which we’ve had since the 1980s with Rogue and more recently with No Man’s Sky. Generative AI provides a wealth of generating game levels that are controllable, fit particular players or player types and have specific challenges. It used to be 10 to 15 years ago that all of the animation was simulated and used every time. As we’ve gotten better at procedural animation, you get more fluidity. Finally, what everybody is talking about right now is not so easy – the dialogue tree through large language models, where we can have real dynamic dialogue. We are going to have technical advancements that will make large language models more controllable and less likely to say random words. We are also going to need to design around the fact that large language models are fundamentally unreliable.”

One should not forget that AI is a tool. “The challenge is to make sure that we learn how to integrate this potential to create meaningful experiences beyond being a mere gadget,” Jacquier states. “As a player, you want to explore a world and feel that each character and each situation is unique, and that involves a vast variety of characters in different moods and with different backgrounds. As such, there is a need to create many variations to any mundane situation, such as one character buying fish from another in a market. A writer tasked with writing 20 variations for each of those situations might come up with a handful of examples before the task might become tedious. This is where Ghostwriter kicks in: proposing NPC dialogues and their variations to a writer gives the writer more variations to work with and more time to polish the most important narrative elements. This can help create even more believable worlds for players to explore, with the potential for unlocking more complex procedural narratives for all NPCs, no matter how ancillary they are to the main story. This is a great example of how generative AI can assist without sacrificing the narrative integrity of our games.”

“NVIDIA ACE for games is our custom AI model foundry, which brings a level of intelligence to game characters previously not seen before,” Nnoli remarks. “Traditionally, player interactions with NPCs have been transactional, scripted and short-lived. Now, generative AI can make NPCs more conversational with persistent personalities that evolve over time and that are unique to the individual player. The process to deploy intelligent NPCs is very different from the NPCs today with their preset dialogue choices. Developers will need to run LLMs, customized with game-specific lore, through a cloud service or PC in real-time. And, they’ll need to have facial animation that adapts to dynamic language in real-time and speech that sounds lifelike – all while ensuring conversations are on topic.” The creation of NPCs has become more sophisticated, Nnoli says. “The number of prerecorded lines has grown. The number of options a player has to interact with NPCs has increased, and facial animations have become more realistic. Gamers’ expectations when it comes to narrative decision-making continue to rise, and AI can significantly multiply the number of interactions that a user has with a story and allow for quicker injections of new narratives [through LLMs like NVIDIA NeMo and ChatGPT].”

Creating aesthetic variations is part of the heritage of Unity. “If one of the things is that you need to have 100 variations of the best sword,” Hauwert explains. “You have already trained against your own art style, and we allow you to easily generate another 100 more. You can start building variations based on that depth of how the game ecosystem works and being able to control that as well. Then we think about gameplay and how you can learn from others. How cool is it if you could play against the AI of one of your favorite streamers on Twitch? Or instead of ghost racers you could play against an interactive version of that player that has already been. We’re still at the beginning of that journey.” Unity has established partnerships with Leonardo.ai, Replica Studios and Inworld AI. “It is all about building up this ecosystem of AI tools, but also classifying them clearly for our community as opportunities of engagement.” The technology alone is not enough, Hauwert declares. “What we’re learning is how to make the optimal UX that sits within a workflow when it comes to using it. Then, there are all of these fun thresholds that creators need to cross to be able to even target this or be able to use it. We are learning a lot from the creators that now have access to our data and are using Muse Chat, for example, and telling us how it is or is not useful.”

While technology is generally designed to help individuals achieve more, there is also a sense that more checks and balances need to be put in place. “By lowering the barrier to entry for content creation, there is going to be an increasing influx of content that automatically calls for stronger moderation and safeguards to prevent the creation of things we don’t want to see in games,” Jacquier observes. “If implemented right, AI has the potential to significantly change the way games are developed and played, with obvious positives for both game developers and players: more immersive game worlds and diverse and rich characters; more engaging or challenging gameplay; personalization of the experience and AI-based user-generated content; more believable world components like fluids, fire or smoke simulations; and overall production efficiency at the service of faster creative workflows.” Fear of AI becoming sentient and making a human workforce obsolete is misguided. “AI is a tool,” Togelius states. “But as with every tool, it gives you new performances. If you just try to fit a new tool into an old design and workflows, you’re going to be limited.” Unity starts with the creator rather than the technology. “We will always be on the side of creators as we deploy this,” Hauwert remarks. “For me, AI will accelerate the entire game industry to be more creative.”