By IAN FAILES

When visual effects artists build computer-generated creatures, characters or environments, they certainly don’t do things by halves. And that means the resulting CG assets tend to be, well, complicated. Here, a range of modelers, texture painters, riggers, lighters, FX and lookdev artists as well as crowd, animation and CG supervisors break down how they made some of their most complex assets in recent times.

A HUMAN-LIKE CREATURE MADE UP OF… HUMAN-LIKE CREATURES

The miniseries Lisey’s Story sees the emergence of a gigantic human-like creature realized by Scanline VFX called ‘The Long Boy,’ made up of ‘countless suffering souls.’ Artists began a sculpt, as modeler Kurtis Dawe states, “by splitting it into parts and tackling the individual corpses first. We made five different types of corpses with varying body shapes that were distributed randomly to make up The Long Boy’s body. A big challenge was also finding a clever way to use these individual corpses to construct his different body parts in a way that could function.”
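The random distribution Dawe describes can be illustrated with a minimal Python sketch. It assumes a simple seeded random choice per placement slot; the type labels, the function name and the slot count are hypothetical, and the article does not describe the tool Scanline actually used for this step.

```python
import random
from collections import Counter

# Hypothetical labels for the five corpse body types mentioned in the article.
CORPSE_TYPES = ["type_a", "type_b", "type_c", "type_d", "type_e"]

def assign_corpse_types(slot_count, seed=0):
    """Pick one of the five corpse types for each placement slot.

    A fixed seed keeps the assignment repeatable, so the same slot always
    receives the same corpse type when the layout is rebuilt.
    """
    rng = random.Random(seed)
    return [rng.choice(CORPSE_TYPES) for _ in range(slot_count)]

# Illustrative slot count only; not a figure tied to this modeling step.
assignment = assign_corpse_types(200, seed=42)
counts = Counter(assignment)  # how many slots each type ended up filling
```

Seeding the generator is the design choice worth noting: a purely random pick would reshuffle the body every time the asset was regenerated, which would make it hard to review.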

The animation department painstakingly posed the low-resolution rigs of individual corpses and elements to match the sculpt. “The Long Boy rig, with low-resolution static corpses, was used by animation in each shot,” says Crowd Department Supervisor Sallu Kazi. “For the final round of animation, the rig was pushed through a Golaem plugin in Maya, which would read the data stored on the rig, recreate the exact poses and layer the motion from the animation library, including facial performances, on top of each individual corpse. The final rig had 590 individual corpses, 486 heads, 480 arms and three legs.”

Performance capture, in addition to keyframe animation, also came into play, according to Animation Supervisor Mattias Brunosson. “It was a way to quickly collect a wide variety of motions for the corpses to be able to match what The Long Boy was emoting in the scene, from frantic, painful convulsions to more subdued motions. We then created a library of cycles that both the animators and the crowd department used to give life to all the corpses making up The Long Boy. Facial capture was used for the corpses as well. The performances were adjusted and edited to fit with the cycles we created for the bodies.”

Reference that showed malnourishment and decay on human bodies was used to guide texturing. “The blood on the corpses had a deep red color tone and a matte quality, as plenty of time has passed and the blood on the corpses would have dried up,” explains CG Supervisor Pablovsky Ramos-Nieves. “We referenced images of burned corpses and added that look below the layer of blood. The skin had to look and feel like flesh, so those parts had more of a glossy and reflective sheen that allowed for the creature to receive nice highlights from the moonlight. We also dressed up some of the corpses with damaged and weathered outfits. Finally, we added an extra layer of wet and dried mud to add more color variation as it dragged its body over the ground.”

Interaction with plant life required FX simulations from Scanline. “We wanted to achieve precise interaction with the moving bodies, each leaf and blade of grass,” notes FX Supervisor Marcel Kern. “In shots where the creature is moving quickly through the forest, we used a custom FX rig to push down all the grass and break every tree in his path. We also created skin deformation simulations to have a transition from The Long Boy’s body to all the corpses attached to him.”

CREATING CARNAGE

DNEG’s Carnage asset for Venom: Let There Be Carnage needed to facilitate high-octane fight scenes, shape-shifting and extensive tentacle movement. “Like any other complex asset, a simple start with a very strong foundation is key,” notes Lead Modeler/Sculptor Lucas Cuenca. “Carnage was built like an action figure where you are able to interchange arms, legs and tentacles.”

To sculpt Carnage, Cuenca says he started with human anatomy but made it very distorted. “The muscles didn’t need to be attached to the bones; instead, they were attached to other muscles, sometimes shaped like bones. The sculpture had to sell the idea that Carnage was able to shift shapes, add weapons to the arms and extrude tentacles out of any part of the body, but at the same time he had to feel strong and have a structure to him.”

The Carnage animation rig was built in a modular way to aid in the morphing nature of the character, as Animation Supervisor Ricardo Silva details. “The base is a standard human rig, but then he also has all these extra parts that can be loaded and attached when needed. The rig had a lot of flexibility with unconventional deformations like stretching and scale for bone joints. This was mostly used for the transformation shots where Carnage’s shape and size had to conform to Cletus for the morphing effect.”

“Our rigging/creature FX team also created a Ziva simulation rig,” adds CG Supervisor Eve Levasseur-Marineau. “This took into account his unconventional exposed musculature and allowed us to both introduce a layer of dynamics but also relax any issue in the topology introduced by the extreme poses. Our tentacle rig was completely overhauled for the show to make it much more versatile and faster for the animation team to work with.”

DNEG integrated Houdini Engine into its Carnage Maya rig approach, enabling the animators to load a tentacle rig and create the performance needed by visualizing a simple cylinder shape. “We would just turn on the OTL node and have a very accurate representation of how things look like coming out of Houdini,” explains Silva. “We had a list of attributes that the animators can adjust in the OTL and see the changes in real-time. Once the shot was approved, it was baked down and sent to Houdini where the FX artist picked up the same attributes and ran a more refined simulation with extra detail.”

“As animation cached the branching tentacles,” continues Levasseur-Marineau, “the relevant attributes would get included and ingested by the FX team, who would use it as a base to add further noise and deformation to create the organic look of the deformation, add a layer of veins flowing across the tentacles, a layer of translucent membrane goo and a connective goo between the different layers, emphasizing the symbiotic feel of the tentacles.”

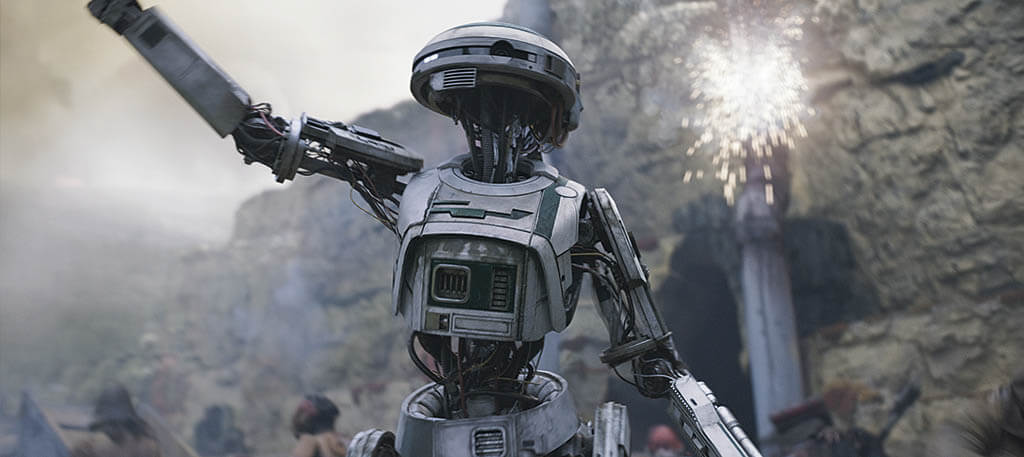

DROID DESIGNS

Solo: A Star Wars Story featured L3-37, a character brought to life on set by actor Phoebe Waller-Bridge wearing partial practical pieces of the droid. Industrial Light & Magic (ILM) also crafted L3 entirely in CG, sharing final shots with Hybride.

“We had a high-resolution LiDAR scan of L3 and also a detailed set of photos taken at 360 degrees, plus different angles for maximum coverage for both the costume and also the life-size prop that production had constructed,” explains ILM Senior Modeler Gerald Blaise. “First, I had to model the life-size prop of L3, matching every element of it with exacting precision, prioritizing the parts from the costume and then the mechanical parts. Once that was done, I imported the scan of Phoebe Waller-Bridge to compare and evaluate how it sat on her body when she was wearing it and how it interacted with her when standing in various poses.”

From there, Blaise reverse-engineered all the possible mechanical movements that would be needed for L3 to perform and also for VFX artists to ultimately replace Waller-Bridge’s visible body parts with the droid parts. “We went back and forth with our rigging department to test the movements, make adjustments where necessary, and to check the range of motion to ensure we were staying true to the range of movement that Phoebe was actually able to achieve on set in the physical costume,” says Blaise.

L3 is constructed from various astromech parts, so Blaise studied different droids from across the Star Wars saga for reference in the build. Maya was used for modeling and KeyShot to add some base shading materials before the model was texture painted. “This would give me a rough first representation of it to compare to the real costume. From there, I could make sure all of the edges and thickness of each part felt right in an environment.”

After the droid was modeled, rigged and textured, it was shared with Hybride for shot production. Sharing assets between studios has become far more common in the VFX industry. “Outside of the technicality of texture color pipeline and shading to match the look we created for her,” outlines Blaise, “Hybride also had to ingest our specialized rig and learn the way it was used in order to ‘drive’ L3. Both the ILM animators and the team at Hybride did a terrific job with L3, and it was a real pleasure seeing her come to life on screen in totality, after many dailies sessions watching our pieces replace the green spandex elements of Phoebe’s costume and match seamlessly to the hard surface part of the L3 costume.”

RENDERING THE RED ROOM

The airborne Red Room seen in the finale of Black Widow was a Digital Domain creation, with the studio crafting a structure made of 355 exterior CG assets, and then another 700 CG assets, including debris, for its crash to earth. The hallmarks of the Red Room design are several arms connected to a massive central tower.

“The arms house airstrips, fuel modules, solar panels and cargo,” outlines CG Supervisor Ryan Duhaime. “Details like ladders, doorways and railings were designed and added to maintain a sense of scale. We also established two hero arms that required high-res geometry to integrate seamlessly with the live-action footage by matching LiDAR scans for the physical set piece runways, hallways and containment cells. We then instanced the individual assets, like beams, scaffolding and flooring by combining them into layouts.”
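Instancing, as Duhaime describes it, means storing an asset once and placing lightweight references to it throughout a layout. The Python sketch below shows the general idea only; the class names, the beam asset and its polygon count are hypothetical and are not drawn from Digital Domain's pipeline.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Asset:
    """Geometry that is stored once. The polygon count is illustrative."""
    name: str
    polygon_count: int

@dataclass(frozen=True)
class Instance:
    """A placement that points at a shared asset instead of copying it."""
    asset: Asset
    translate: tuple

def build_layout(asset, positions):
    """Create one instance of the same asset per position."""
    return [Instance(asset, pos) for pos in positions]

beam = Asset("beam", 1200)
layout = build_layout(beam, [(x * 2.0, 0.0, 0.0) for x in range(100)])

# Geometry held in memory: each distinct asset is counted once.
unique_polys = sum(a.polygon_count for a in {inst.asset for inst in layout})
# Geometry that appears in the rendered layout: counted once per instance.
drawn_polys = sum(inst.asset.polygon_count for inst in layout)
```

In this toy case 100 beams appear in the layout while only one beam's worth of geometry is stored, which is why instancing suits structures built from repeated beams, scaffolding and flooring.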

“We had the arms and the props on them divided into multiple sections, which allowed for greater control in what we would see that was left behind as the destruction took place,” continues Lead Layout Artist Natalie Delfs. “There is also a ton of movement throughout the ship during the sequence, so we knew we would need to build a wide variety of layouts for different areas inside and out of the ship as the location of the action changed. This included building damaged and undamaged layouts for multiple areas.”

Texturing of the Red Room was established in Substance Painter starting with five main materials. “The number of shaders increased as we went, based on the level of detail and complexity,” says texture artist Bo Kwon. “We also created separate grungy shaders to be added on top of our base layers. Using these smart materials gave us a fast turnaround of assets to build the foundation, and to decide the look of the Red Room. For the closeup shots and hero assets, we then imported those maps into Mari to add more details.”

Lookdev occurred concurrently with texturing, as Lookdev Lead James Stuart describes. “We looked into the Redshift material and the components which we could base all of our lookdevs on. In the end, we decided to utilize a metalness workflow from Substance. The Red Room is mostly made up of metallic objects, so we created base materials for bare metal, painted metal, rust and scratches. Once we found the look we wanted with these materials, we were able to provide baseline values for all the components we needed from textures, including diffuse, specular roughness, metalness and bump.”
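In a metalness workflow, a single base color and a metalness value are commonly converted into separate diffuse and specular colors. The Python sketch below follows the widely used convention of a 4% specular reflectance for non-metals; that constant and the function names are assumptions for illustration, not values quoted by the Digital Domain team.

```python
# Common convention for non-metal specular reflectance (an assumption here).
DIELECTRIC_F0 = 0.04

def lerp(a, b, t):
    """Linear blend from a (t=0) to b (t=1)."""
    return a * (1.0 - t) + b * t

def metalness_to_components(base_color, metalness):
    """Derive diffuse and specular colors from base color and metalness.

    Metals (metalness 1) have no diffuse color and a tinted specular;
    non-metals (metalness 0) keep their diffuse color and get a neutral,
    low-intensity specular.
    """
    diffuse = tuple(c * (1.0 - metalness) for c in base_color)
    specular = tuple(lerp(DIELECTRIC_F0, c, metalness) for c in base_color)
    return diffuse, specular

# Illustrative values: a bare metal and a painted (non-metal) surface.
bare_metal = metalness_to_components((0.8, 0.8, 0.8), 1.0)
painted_metal = metalness_to_components((0.6, 0.1, 0.1), 0.0)
```

A rust or scratch mask would typically vary the metalness value per pixel, so the same function covers the transition between painted and exposed metal.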

Lighting was deceptively simple, suggests Lighting Lead Tim Crowson, “but ultimately required a lot of fine-tuning at the shot level. Our DFX Supervisor, Hanzhi Tang, captured a very high-dynamic-range image of a sunset, which we used as the basis for our exterior lighting. We split this into a couple of lights to maintain artistic control over fill and key, and established this as our starting point for shot lighting. The other vital component to selling this kind of high-altitude, high-speed traversal was the use of dynamic shadows from surrounding objects, sometimes cast by clouds, sometimes by falling debris. It was imperative to include shadowing from these surrounding objects to sell the sense of speed and integrate our CG elements fully.”
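One way to split a single HDR image into separately controllable key and fill lights is to separate the brightest pixels, such as the sun, from the rest of the sky. The Python sketch below assumes a simple luminance threshold on linear RGB values; the article does not say how the split was actually made, and the function names and threshold are hypothetical.

```python
def luminance(rgb):
    """Relative luminance of a linear RGB pixel (Rec. 709 weights)."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def split_key_fill(pixels, threshold):
    """Split HDR pixels into a key image and a fill image.

    Pixels brighter than the threshold go to the key light and the rest
    go to the fill light. Each pixel lands in exactly one image, so the
    two images add back up to the original.
    """
    black = (0.0, 0.0, 0.0)
    key, fill = [], []
    for pixel in pixels:
        if luminance(pixel) > threshold:
            key.append(pixel)
            fill.append(black)
        else:
            key.append(black)
            fill.append(pixel)
    return key, fill

# Two illustrative pixels: a very bright sun sample and a dim sky sample.
sky = [(50.0, 40.0, 20.0), (0.3, 0.4, 0.6)]
key_light, fill_light = split_key_fill(sky, threshold=10.0)
```

Because the two images sum to the original, a lighter can scale the key and fill independently per shot and still return to the captured sunset by setting both multipliers to one.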

Overall, Digital Domain used a wealth of tools for the Red Room, as Duhaime observes. “We utilized Foundry’s Nuke for our compositing needs; Houdini’s Mantra for clouds, dust, fire, smoke and initial FX destruction renders; Houdini for our FX dev, CFX groom and cloth simulations; V-Ray for our hero character digital doubles; Redshift for hard-surface objects like the Red Room, vehicles and the destruction renders; Substance Painter and Mari for our texture painting needs; and Maya for our general modeling, animation, lookdev, lighting and layout setups.”